The rate-limiting steps of drug discovery are not just chemistry problems

There are terms used in chemistry that could help the lay public out quite a bit, if only they knew them. One that I’ve seen many non-chemists applaud is the concept of a ‘rate-limiting step’. We chemists are used to thinking of reaction mechanisms with several discrete stages, and realising that they don’t all run with equal facility. One particular part of the process (a bond rotation, a rearrangement, an anion formation or whatever) will turn out to be the bottleneck for the whole thing. Nothing you do will speed up the overall reaction unless it addresses that particular slowdown.

People recognise this concept, even if they haven’t thought about it in quite those terms. But sometimes, as a drug discovery chemist, I wonder if I recognise it myself. The whole process of drug discovery has a great many steps in it, and there is no doubt that some of them are slower and more difficult than others. What I would like to remind people of (myself included) is that none of the true rate-limiting steps in drug discovery has much to do with chemistry.

I realise I may sound as if I’m trying to shift the blame. Or I might even sound like a bit of a heretic – particularly if you’re used to thinking of the chemists doing hit-to-lead work, evaluating a big list of chemotypes from the primary screen and seeing which ones are actually real results. Or if you’re picturing the project team, synthesising analogue after analogue, trying to optimise all the properties needed for a clinical candidate, all at the same time. These certainly do take substantial work; I’m not trying to minimise that. Clinical trials, though, almost invariably take more time. And they certainly cost more money.

What’s more, they have a much higher probability of giving you nothing at all for all that effort. Some med-chem teams may never find a compound that’s worth taking to the clinic, but I’d say that the majority eventually will. By contrast, about 90% of the drug candidates that enter clinical trials fail. This sad and brutal statistic is the one thing I’d point to as an overall rate-limiting step for the total number of new drugs making it to market. If you want to increase the industry’s productivity, find a way to have us wipe out only eight times out of ten instead of the full nine: that alone would double the number of successes. Looking more closely, those failures are almost entirely due to lack of efficacy (because the drug didn’t work as well as was hoped) or to toxicity (because it turned out to do something else as well). And neither of those is a chemistry problem.

That’s too bad for me, because most of the ideas I seem to get are broadly chemistry-related. I get inspiration about ways to make compounds (or libraries of them), or ways to assay them, or to generate higher quality lead compounds as starting points, etc. Those can be quite interesting (well, to me!) and they’re certainly not useless, but in the grand drug discovery scheme of things they’re fixing the wrong problems. I’m not alone in this, nowhere near: all those people touting automated synthesis and purification, machine-learning-based retrosynthesis software, or new DNA-encoded library reactions and suchlike are doing the same thing. We’re all thinking up ways for projects to more quickly and efficiently get up to the point where they can slam into the real problems with this business.

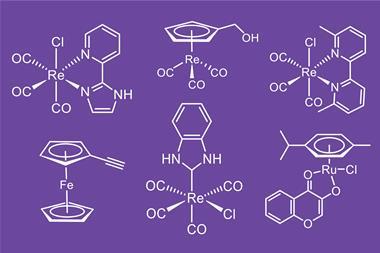

How would one go about fixing those, then? Well, as far as I (or anyone else) can see, it comes down to knowing and understanding a lot more biology than we do now. We’re not talking just about optimisation, either. Some of that biology is not even discovered yet – I feel safe saying that there are profoundly important things going on inside cells that we haven’t so much as recognised. So I think that helping to apply what we chemists know to the job of uncovering these mysteries is a worthwhile endeavour, and it’s why the field I identify with most has changed over the years from organic chemistry, to medicinal chemistry, to chemical biology. It’s all been a search for those rate-limiting steps.
