Study reveals possible link between obfuscation and massaged data in retracted papers

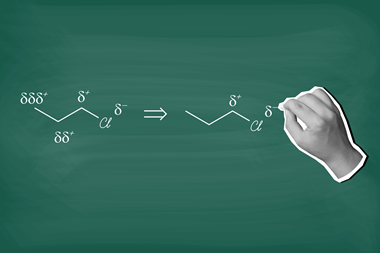

Scientists who manipulate or falsify data may be masking their results behind excess jargon in published papers, according to a team of researchers at Stanford University in the US.

Jeff Hancock, a communications psychologist, and his student David Markowitz trawled through the life sciences paper repository PubMed for publications that had been retracted because of fraudulent data. They found 253 such studies published between 1973 and 2013. Using an ‘obfuscation index’ rating system, they looked at third-person pronoun use and linguistic obfuscation, both widely acknowledged indicators of lying.

The researchers found a 1.5% average increase in jargon in the retracted papers compared with 253 sound studies from the same period and 62 papers retracted for reasons other than fraud. They also found a positive relationship between linguistic obfuscation and the number of references per paper, suggesting that authors may try to cover up fraud by increasing the cost of assessing a suspicious paper.

Speaking to Stanford News, Hancock suggested that software capable of detecting such obfuscation, and the fraud behind it, in submitted papers could be an effective tool for journal editors. But he cautioned that such a tool would need extensive development to reduce its false-positive rate, and that it might even undermine trust within the academic community.

References

D M Markowitz and J T Hancock, J. Lang. Soc. Psychol., 2015, DOI: 10.1177/0261927X15614605
