Experimenting with better ways to assess researchers

In 2014, University of Cambridge computer scientist Neil Lawrence was asked to chair the annual machine learning and computational neuroscience conference known as EuroNIPS (Neural Information Processing Systems). Fewer than a quarter of papers submitted for review gained a presenting spot, which is typical for this type of prestigious meeting, in a field where conference proceedings are the common mode of publishing results. His co-chair, Google Research VP Corinna Cortes, suggested they take the opportunity to experiment with the peer review process and see what level of consistency it provides. Peer review is considered a ‘gold standard’ in science and generally receives widespread support from researchers, but there is evidence that it may not always be an impartial arbiter of quality. The EuroNIPS conference provided an opportunity to investigate this further.

Lawrence and Cortes convened two reviewing panels and 10% of the papers submitted – 166 papers – were reviewed by both groups. In the end, 43 of these papers received a different decision from each panel, with the committees disagreeing on 57% of the 38 papers that were accepted for presentation. The results were slightly better than random (where you would expect disagreement on 77.5% of accepted papers) – but is this good enough for what could have been a career-defining decision for a young researcher?
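The 77.5% random baseline can be reproduced with a simple model. The sketch below assumes a second committee that accepts papers independently at random at the conference's overall acceptance rate; the 22.5% rate is inferred from the quoted 77.5% figure rather than stated in the text, and the function name is hypothetical.

```python
def random_disagreement_on_accepted(acceptance_rate: float) -> float:
    """Chance that a paper accepted by one committee is rejected by a second
    committee that accepts independently at random at the same rate."""
    if not 0.0 <= acceptance_rate <= 1.0:
        raise ValueError("acceptance_rate must be between 0 and 1")
    return 1.0 - acceptance_rate


# Assumed acceptance rate of 22.5%, inferred from the 77.5% baseline.
baseline = random_disagreement_on_accepted(0.225)
observed = 0.57  # disagreement on accepted papers reported for the experiment

print(f"random baseline: {baseline:.1%}, observed: {observed:.1%}")
```

Under this assumption the two panels did better than chance, but by only about 20 percentage points.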

Other studies show similar inconsistencies. In 2018, 43 reviewers were given the same 25 anonymous US National Institutes of Health grant applications to review and showed no agreement in their quantitative or qualitative evaluations. The study concluded that two randomly selected ratings of the same application were on average just as similar as two randomly selected ratings of different applications.1

Perhaps it is unreasonable to expect high levels of consistency from peer review. As Lawrence states, ‘you are sampling from three people who are not objective … they’ve got particular opinions’. This may not once have been a problem, but the current competitive nature of academia has made each peer-reviewed decision highly significant. ‘If you have a funding rate of 5%, or 10%, you’re going to have very few winners and a lot of losers, and a lot of undeserving losers and some undeserving winners,’ says Johan Bollen, an expert in complex systems from Indiana University Bloomington, US, who has been investigating alternative models for distributing funding.

Lawrence agrees that the root of the problem is how career-defining peer-reviewed decisions can be. ‘Whether [a student] managed to get [their] paper [into the EuroNIPS conference] shouldn’t be the be-all and end-all … unfortunately, it often is.’

For grant allocation there is the added issue of the amount of time wasted writing and peer reviewing proposals that ultimately go unfunded. One estimate based on Australian researchers suggested that preparing the 3,700 proposals they collectively submitted in a year represented five centuries’ worth of research time.2 But Bollen says this is not to criticise funding agencies. ‘A lot of really good people are involved … but a system that funds only 15% of the applicants, leaving 85% with zero money, cannot be efficient.’

Unconscious bias

Another charge levelled at peer review is that it suffers from the unconscious biases reviewers undoubtedly carry with them. These learned stereotypes are unintentional, but deeply ingrained and able to influence decisions. In 2019 the Royal Society of Chemistry (RSC) produced a report looking at the gender gap in the success of submissions to its journals from 2014–2018. RSC data scientist Aileen Day found differences existed in all stages, including peer review.3 ‘It was small, but it was significant,’ says Day. For example, while 23.9% of corresponding authors who submit papers are female, only 22.9% of papers accepted for publication have female corresponding authors. ‘The important thing is differences stack up through the whole publication process,’ explains Day.

The study also found that men and women behave differently as reviewers: ‘If you were a woman you were more likely to say major revisions, if you were a man, reject,’ says Day. Reviewers also give preferential treatment to their own gender.

To combat unconscious biases the RSC has released A Framework for Action in Scientific Publishing, which provides steps that editorial boards and staff can take to make publishing more inclusive. In July, other publishers, including the American Chemical Society and Elsevier, agreed to join the RSC in a commitment to monitoring and reducing bias in science publishing. The group, representing more than 7000 journals, has agreed to pool resources and data, and to work towards an appropriate representation of authors, reviewers and editorial decision-makers. The working group will collaborate on policy development, good practices related to diversity data collection and sharing lessons learned from trialling new processes.

Journals are clearly trying to ensure a better gender balance of reviewers and many institutions provide training for staff and reviewers to overcome unconscious biases, but there are differences in opinions on the effectiveness of these measures. A recent report by the professional HR body the Chartered Institute of Personnel and Development highlighted the ‘extremely limited’ evidence that training could change employee behaviour.

One idea for preventing bias is to hide the identity of the author of the paper or proposal, something the Engineering and Physical Sciences Research Council (EPSRC) have looked at. ‘We have trialled a number of novel approaches to peer review over the years including those involving anonymous or double-blind peer review,’ says head of business improvement Louise Tillman. But many reviewers say that in relatively small academic communities it’s difficult to ensure anonymity.

At the opposite end of the spectrum some publications have gone for open peer review. For example, in February Nature announced it was offering the option of having reports from referees (who can still choose to stay anonymous) published alongside author responses. ‘In an ideal world, you might want the name of the reviewers to be open as well, but there’s a challenge there,’ says Lawrence, ‘[referees] might be unwilling to share a forthright opinion.’ The machine learning journal edited by Lawrence publishes peer reviews alongside papers. ‘That may have been my favourite innovation to come from the EuroNIPS experiment,’ he notes.

Some subjects seem to be slower to change than others. There are few examples of open review chemistry journals, for example, though there are signs of movement here recently – the RSC’s two newest journals offer authors the option of transparent peer review (publishing their paper’s peer review reports). Lawrence says the slow pace may relate to chemistry publishing being dominated by large society publishers. ‘Communities that have struggled are those that have professional institutions managing their reviewing,’ suggests Lawrence, who sees these types of organisation as slow to change.

Funding lottery

If peer review is such a lottery, why not actually replace it with a lottery? Several funding bodies have trialled such systems. Since 2013 the New Zealand Health Research Council has awarded its ‘explorer grants’ worth NZ$150,000 (£76,000) using a random number generator to select from all applications that were verified to meet its criteria. Other funders have tested the idea too: the Swiss National Science Foundation experimented with random selection in 2019, drawing lots to select postdoctoral fellowships, and Germany’s Volkswagen Foundation has used lotteries to allocate grants since 2017. Another, perhaps less brutal, model, though not yet tested, suggests that applications not funded go back into the pot – creating a system more like premium bonds.4
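A funding lottery of this kind, including the untested ‘back into the pot’ variant, can be sketched in a few lines. This is an illustration only, not any funder's actual procedure: the function name, application labels, award counts and the seeded random number generator are all hypothetical, and the eligibility check is assumed to have happened before the draw.

```python
import random


def draw_lottery(eligible, n_awards, rng):
    """Pick winners uniformly at random from applications that have already
    passed the eligibility check. Returns (winners, unfunded)."""
    n = min(n_awards, len(eligible))
    winners = rng.sample(eligible, n)
    winner_set = set(winners)
    unfunded = [app for app in eligible if app not in winner_set]
    return winners, unfunded


rng = random.Random(0)  # seeded only so the sketch is reproducible

# Round 1: ten eligible applications, two awards (hypothetical numbers).
pot = [f"app-{i}" for i in range(10)]
winners, pot = draw_lottery(pot, 2, rng)

# 'Back into the pot' variant: unfunded applications re-enter the next
# draw alongside new eligible applications.
pot += [f"new-{i}" for i in range(3)]
winners2, pot = draw_lottery(pot, 2, rng)

print("round 1 winners:", winners)
print("round 2 winners:", winners2)
print("still in the pot:", len(pot))
```

In the carry-over variant an application's chance of eventually being funded grows with each round it stays in the pot, which is what makes it resemble premium bonds.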

A recent review of the New Zealand scheme, which had a 14% acceptance rate overall, showed 63% of applicants who responded to a survey were in favour of the lottery, and 25% against. Perhaps not surprisingly, support was higher among those who won! But respondents also reported that the system did not reduce the time they spent preparing their applications as they still needed to pass an initial quality threshold to enter the lottery.5

Such systems ‘uniquely embody the worst of all possible worlds,’ says Bollen. ‘It’s essentially funding agencies and scientists saying we cannot do decent [peer] review … it’s almost spiteful.’

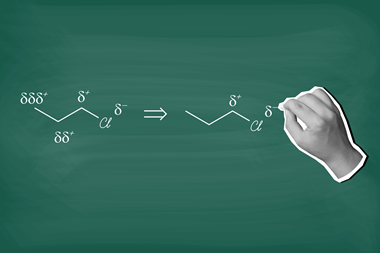

Bollen has come up with another idea for funding researchers: ‘I figured, why don’t we just write everybody a cheque?’ In 2019, inspired by mathematical models used in internet search engines, he published his idea for a ‘self-organised fund allocation’ system, where every scientist periodically receives an equal, unconditional amount of funding. The catch is that they must then anonymously donate a given fraction of this to other scientists who are not collaborators or from the same institution.6 Those scientists would then re-distribute a portion of what they receive. Bollen says the model ‘converges on a distribution and allocation of funding that, overall, reflects the preferences of everybody in that community collectively’ – perhaps the ultimate peer review. ‘The results could be just as good, just as fair as the systems that we have now, but without all of the overhead,’ he adds.
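The redistribution loop Bollen describes can be simulated with a toy model. The sketch below is not the published implementation: the base amount, the donated fraction, the number of rounds and the preference weights are all assumed values, and the only exclusion modelled is that nobody can donate to themselves (a weight of zero could also stand in for a collaborator or colleague at the same institution).

```python
def simulate_allocation(preferences, base=100.0, fraction=0.5, rounds=50):
    """Toy self-organised fund allocation.

    preferences[i][j] is the relative weight scientist i gives scientist j
    when donating. Each round everyone receives `base` plus the donations
    made to them, and must pass on `fraction` of that total.
    """
    n = len(preferences)
    funding = [base] * n
    for _ in range(rounds):
        received = [0.0] * n
        for donor, weights in enumerate(preferences):
            total = sum(w for j, w in enumerate(weights) if j != donor)
            if total <= 0:
                raise ValueError(f"scientist {donor} has nobody to donate to")
            donation = fraction * funding[donor]
            for j, w in enumerate(weights):
                if j != donor:
                    received[j] += donation * w / total
        funding = [base + r for r in received]
    return funding


# Three hypothetical scientists; 1 and 2 both rate scientist 0 highly.
preferences = [
    [0, 1, 1],
    [3, 0, 1],
    [3, 1, 0],
]
funding = simulate_allocation(preferences)
print([round(x, 2) for x in funding])
```

In this toy version the amounts settle to a fixed distribution after a few dozen rounds, with scientist 0 receiving the most, which illustrates the convergence Bollen describes without saying anything about how a real community would behave.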

Tweaking the model could help solve current issues of bias, for example by mandating researchers to give a certain proportion of their funds to underrepresented groups. Of course such a system might favour academics who ‘talk a good game’ and disadvantage those in obscure fields, but that’s already the case in the present system, says Bollen. So far his model has had no takers, but has received lots of interest from colleagues and funding bodies. He is hopeful that after the current period of upheaval there may be an appetite for change.

Lawrence thinks we may just need to accept that peer review is always going to have flaws: ‘the idea that there’s a perfect, noise-free system is the worst mistake.’ A number of recent well-publicised retractions, including a Lancet paper on Covid-19 hydroxychloroquine treatment, show that peer review is not error-proof. According to the website Retraction Watch at least 118 chemistry papers were retracted in 2019. Ultimately we may need to be realistic about what we mean by ‘gold standard’. ‘[Peer review] may be the best system we have for verifying research, but [that doesn’t always] mean [that] the research that’s rejected is somehow flawed or the research accepted is somehow brilliant,’ says Lawrence.

This article was updated on 26 August 2020. An earlier version referred to a comparison of publishing data from different countries, which had not been verified, and Aileen Day’s quoted use of the word ‘bias’ has been clarified to ‘differences’.

References

1 E L Pier et al, PNAS, 2018, 115, 2952 (DOI: 10.1073/pnas.1714379115)

2 D L Herbert, A G Barnett and N Graves, Nature, 2013, 495, 314 (DOI: 10.1038/495314d)

3 A E Day, P Corbett and J Boyle, Chem. Sci., 2020, 11, 2277 (DOI: 10.1039/C9SC04090K)

4 F C Fang and A Casadevall, mBio, 2016, 7, e00422 (DOI: 10.1128/mBio.00422-16)

5 M Liu et al, Res. Integr. Peer Rev., 2020, 5, 3 (DOI: 10.1186/s41073-019-0089-z)

6 J Bollen et al, Ecol. Soc., 2019, 24, 29 (DOI: 10.5751/ES-11005-240329)
