Research papers that list Chinese institutions account for more than half of the retractions across 10 academic publishers, according to a new large-scale analysis.

The study, which has not been peer reviewed yet, was published on arXiv last month and examined 46,000 retractions issued by scholarly journals between 1997 and 2026 that were indexed by the Retraction Watch Database.

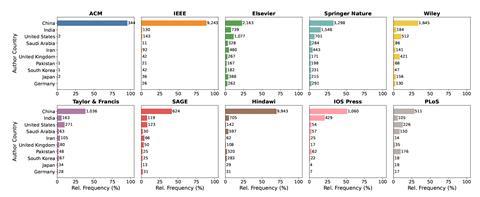

According to the study, there were 29,867 Chinese affiliations listed on these retractions – more than 91% of which don’t list international collaborators. Researchers in China produced 16.5% of all research output during that time period, the study found, despite the country’s institutions being listed on more than 52% of retracted papers in the sample.
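
As a rough illustration of the gap between those two percentages, the sketch below divides the share of retractions by the share of research output. The ratio is not a figure reported by the study. The function name `overrepresentation` is hypothetical, and treating 'more than 52%' as exactly 52% is an assumption, so the result is best read as a lower bound.

```python
# Percentages quoted in the article. "More than 52%" is treated as exactly 52
# (an assumption), so the ratio computed here is a lower bound.
SHARE_OF_RETRACTIONS = 52.0  # % of retracted papers listing a Chinese institution
SHARE_OF_OUTPUT = 16.5       # % of all research output from researchers in China


def overrepresentation(retraction_share: float, output_share: float) -> float:
    """Return retraction share divided by output share.

    A value of 1.0 would mean retractions are proportional to output.
    """
    if output_share <= 0:
        raise ValueError("output_share must be positive")
    return retraction_share / output_share


ratio = overrepresentation(SHARE_OF_RETRACTIONS, SHARE_OF_OUTPUT)
print(f"Share of retractions is about {ratio:.2f} times the share of output")
```

On these figures, China's share of retractions in the sample is a little over three times its share of research output.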

Following China, institutions based in India, the US and Saudi Arabia feature on 7.25%, 5.72% and 2.83% of retractions, respectively.

The study also examined the differences between retraction practices at the 10 largest academic publishers. Nearly one in four retractions in the database were issued by journals published by Hindawi, which had a retraction rate of around 320 retractions per 10,000 papers published. In 2021, publishing giant John Wiley & Sons acquired around 200 Hindawi journals but later decided to drop the Hindawi brand name after significant financial losses.

IOS Press follows Hindawi with a retraction rate of 284 retractions per 10,000 papers published, the analysis found.

The Institute of Electrical and Electronics Engineers (IEEE) has the second highest absolute number of retractions at 10,094 – just under 22% of the total sample – followed by Springer Nature with 7534, or 16.3% of the total.
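
The percentage shares above can be approximated from the quoted counts. The sketch below is a minimal example that assumes the rounded sample size of 46,000 given earlier in the article, because the exact total is not stated here. The results therefore land close to, but not exactly on, the published percentages. The names `APPROX_TOTAL` and `share_percent` are hypothetical.

```python
# Retraction counts quoted in the article.
COUNTS = {"IEEE": 10_094, "Springer Nature": 7_534}

# Assumption: the article gives the sample size only as a rounded 46,000,
# so the shares computed below are approximate.
APPROX_TOTAL = 46_000


def share_percent(count: int, total: int) -> float:
    """Return count as a percentage of total."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * count / total


shares = {name: share_percent(count, APPROX_TOTAL) for name, count in COUNTS.items()}

for name, share in shares.items():
    print(f"{name}: roughly {share:.1f}% of the sample")
```

With the rounded total, the IEEE share comes out just under 22%, matching the article. The Springer Nature share comes out marginally above the reported 16.3%, which suggests the exact sample is slightly larger than 46,000.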

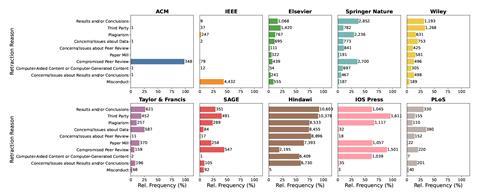

The reasons for retractions also vary significantly between publishers. While the vast majority of retractions by the IEEE are due to misconduct, almost all the retractions issued by the Association for Computing Machinery (ACM) are a result of compromised peer review.

While compromised peer review also features as the most likely reason for Sage to retract a paper, it was the least likely reason for Hindawi to pull manuscripts.

All publishers except the IEEE and the ACM were significantly more likely to retract research articles than other article types; the IEEE and ACM were vastly more likely to pull conference abstracts.

Springer Nature journals most often retracted papers due to problems with the results or conclusions, whilst Elsevier, Wiley and IOS Press titles most often listed third-party involvement as the reason for retraction. In most cases, third parties are likely to be paper mills, which are shady companies that churn out nonsensical, often plagiarised studies and sell authorship slots and citations.

The study’s sole author, Jonas Oppenlaender, a computer scientist at the University of Oulu, Finland, didn’t reply to questions by press time.

Jodi Schneider, an information scientist at the University of Wisconsin-Madison, who was not involved with the study, notes that publishers often produce opaque retraction notices, sometimes due to legal issues, which could affect the study’s findings.

As for the discrepancies between countries, Schneider recalls a policy in China that required Chinese hospital doctors to publish a certain number of research papers to be eligible for hospital promotions. But some have noted that China has since taken a number of steps, including rolling out a new policy on how to evaluate physicians in 2023, which could reduce retractions from China in future.

Malte Elson, a psychologist at the University of Bern, Switzerland, who is running a project that pays researchers for spotting errors in papers, says it may be difficult for China to reduce its share of retractions as it continues to increase its research output.

The new study’s numbers may not reflect an accurate picture of the scholarly literature, adds Schneider, who has studied how retracted work continues to be cited after being pulled. ‘Most people who study retraction think that there’s not enough retraction,’ she says. ‘The real story is we have to make it easier to retract.’

Elson agrees. ‘I don’t think these numbers indicate that things are good or particularly bad,’ he says. ‘But clearly there’s more fraud and more plagiarism happening than is indicated by these numbers.’

Schneider says it’s important not to presume automatically that every retraction is due to misconduct, as that makes it harder for researchers to pull papers for honest mistakes. ‘There are competing tensions,’ she says.

Elson thinks biases also likely influence decisions around retractions. ‘A journal run by an American publisher with a US-dominated editorial board is likely going to be much quicker in retracting a Chinese paper than a paper from the United States.’

Jodi Schneider’s affiliation was updated on 1 April 2026
