Improvements to cavity ring-down spectroscopy could also benefit quantum science

US-based scientists have eliminated an electronic source of error that has limited how accurately satellites can measure atmospheric carbon dioxide from space. ‘We have identified, quantified and corrected for a largely overlooked and never-corrected measurement bias,’ says Joseph Hodges, research engineer at the US National Institute of Standards and Technology (NIST) in Gaithersburg.

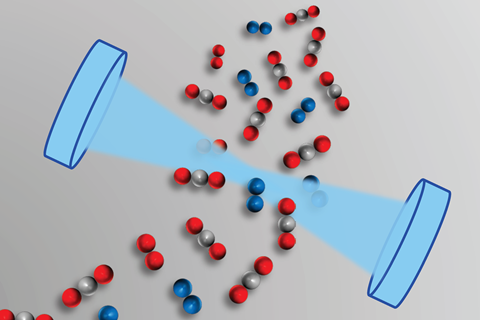

Tracking gases from space involves firing lasers into the atmosphere and measuring the tiny amounts of light that scatter back to the satellites. To do this, it’s important to know the absorption cross-section, a measure of the probability that a given gas molecule will absorb light. Scientists can work that out by separately measuring spectroscopic line intensities, which indicate absorption strength, in labs on Earth and in aeroplanes. By identifying and correcting a bias in the measurement electronics, the NIST team has reduced uncertainties in line intensities of carbon dioxide, improving measurement accuracy 25-fold.

NIST’s study supports NASA’s Orbiting Carbon Observatory-2 (OCO-2) mission, which maps carbon dioxide release and uptake worldwide, pinpointing areas responsible. ‘The OCO-2 retrieval algorithms require highly accurate absorption cross-section data to convert wavelength-resolved measurements of reflected sunlight to surface pressure and carbon dioxide amount,’ Hodges says.

Rings a bell

Consequently, NIST is developing high-accuracy cavity ring-down spectroscopy (CRDS) methods for greenhouse gases, Hodges explains. The name refers to decaying reverberations, much like the way the ring of a bell slowly dies away. But in this case the reverberation that decays is light trapped in a cavity between two mirrors. ‘If you place a dampening object on the bell, the ringing doesn’t last as long,’ Hodges says, because the dampener absorbs energy. ‘For CRDS, the dampening material is simply the gas which absorbs the light reverberating within the cavity.’
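To make the bell analogy concrete, here is a minimal Python sketch of the standard textbook ring-down relation, not the NIST team's analysis code. It assumes an ideal single-exponential decay with no noise, and the function names and the example decay times are illustrative choices. The light leaking from the cavity falls off as exp(-t/τ), and the gas absorption coefficient follows from how much the gas shortens the decay time: α = (1/c)(1/τ_gas − 1/τ_empty).

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def ring_down_signal(t, tau, amplitude=1.0):
    """Ideal light intensity leaking from the cavity at time t (s)."""
    return amplitude * math.exp(-t / tau)


def fit_decay_time(times, signal):
    """Estimate the decay time by a least-squares line fit to ln(signal) vs time."""
    n = len(times)
    logs = [math.log(s) for s in signal]
    mean_t = sum(times) / n
    mean_y = sum(logs) / n
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, logs))
    var = sum((t - mean_t) ** 2 for t in times)
    slope = cov / var  # slope of ln(signal) is -1/tau
    return -1.0 / slope


def absorption_coefficient(tau_gas, tau_empty):
    """Absorption coefficient in 1/m from decay times with and without gas."""
    return (1.0 / tau_gas - 1.0 / tau_empty) / C


# Illustrative numbers only: the gas shortens the ring-down from 100 to 80 microseconds.
times = [i * 1e-6 for i in range(200)]
tau_empty = fit_decay_time(times, [ring_down_signal(t, 100e-6) for t in times])
tau_gas = fit_decay_time(times, [ring_down_signal(t, 80e-6) for t in times])
alpha = absorption_coefficient(tau_gas, tau_empty)
```

The faster the ringing dies away with gas in the cavity, the larger `alpha` is. Real instruments fit noisy decays and must also account for the electronics that record them.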

By recording the energy the gas absorbs, scientists can measure its line intensities and work out its cross-section. However, until now there has been a 1-2% uncertainty in CRDS-obtained line intensities. To trace why, Hodges and his colleagues compared the uncertainties obtained with different instruments. In particular, the NIST researchers paired three CRDS instruments with five different digitisers, which record decay signals onto a computer.

In each case the researchers compared the decay they measured with a digitally produced decay signal, using it to determine calibration coefficients that correct for electronic bias. Measuring carbon dioxide by CRDS using these coefficients then gave line intensity uncertainties of just 0.06%. ‘This 25-fold improvement will enable remote sensing of atmospheres with accuracy of less than one part per million of carbon dioxide, thus enabling improved assignments of carbon sources and sinks on Earth,’ comments NIST chemist Adam Fleisher.
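The idea of calibrating against a known reference decay can be sketched with a toy model in Python. This is not the procedure from the paper. It assumes a hypothetical digitiser with a small quadratic nonlinearity (`digitise` and `EPS` are invented for illustration), a single scalar calibration coefficient, and a fit window that is always the same number of decay times. Real digitiser biases are likely to be more complicated.

```python
import math


def fit_decay_time(times, signal):
    """Estimate the decay time by a least-squares line fit to ln(signal) vs time."""
    n = len(times)
    logs = [math.log(s) for s in signal]
    mean_t = sum(times) / n
    mean_y = sum(logs) / n
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, logs))
    var = sum((t - mean_t) ** 2 for t in times)
    return -var / cov  # slope of ln(signal) is -1/tau


def digitise(voltage, eps):
    """Hypothetical digitiser response: ideal value plus a small quadratic error."""
    return voltage + eps * voltage ** 2


def measured_tau(tau_true, eps, n_points=200, window=5.0):
    """Decay time recovered after the signal has passed through the toy digitiser."""
    times = [window * tau_true * i / n_points for i in range(n_points)]
    recorded = [digitise(math.exp(-t / tau_true), eps) for t in times]
    return fit_decay_time(times, recorded)


def calibration_coefficient(tau_reference, eps):
    """Ratio of the known reference decay time to the one the digitiser reports."""
    return tau_reference / measured_tau(tau_reference, eps)


EPS = 0.01  # assumed nonlinearity strength, illustrative only

# Step 1: record a synthetic decay whose time constant is known exactly.
k = calibration_coefficient(50e-6, EPS)

# Step 2: apply the coefficient to a decay with an unknown (here 80 microsecond) time constant.
raw = measured_tau(80e-6, EPS)
corrected = k * raw
```

In this toy model the uncorrected value `raw` is biased low, because the nonlinearity distorts the start of the decay more than the tail, while `corrected` recovers the true decay time. The correction transfers cleanly only because of the simplifying assumptions stated above.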

In orbit and beyond

Hodges adds that such bias corrections should help make measurements from CRDS instruments used in aircraft or on the ground more consistent. ‘These CRDS systems may not need to be calibrated against consumable gas standards nearly as frequently,’ he says.

‘Every experimentalist should pay heed to this advice,’ adds Gernot Friedrichs, from the University of Kiel in Germany, who was not involved in the work. ‘The paper can be understood as a plea to experimentalists to keep their detection electronics in mind as a significant source of uncertainty when reporting absolute line strength data.’ He adds that the issue of absolute accuracy of CRDS measurements is more important than the specific example of carbon dioxide.

Fleisher adds that there is definitely a broader relevance, and says the team is now set to link its digitiser analysis to new quantum standards.

References

A J Fleisher et al, Phys. Rev. Lett., 2019, DOI: 10.1103/PhysRevLett.123.043001
