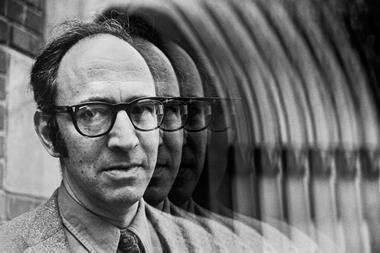

John Ioannidis explains why researchers should be curious about the differences between disciplines

Every scientific investigation, whatever the field, shares a common principle: the scientific method. Hypothesis, experiment, analysis and interpretation unite all disciplines. But the way the method is implemented and the standards of research in each discipline can vary substantially.

For example, disciplines vary widely in the extent to which they pursue replication of their discoveries and in the processes they apply to it. Some disciplines regard replication as mostly a waste of effort and resources, others perform some replication studies, and others still hold that no finding can be published and disseminated without meticulous and extensive replication by multiple teams.

These attitudes are pervasive and influence how scientists conduct themselves and their science: replication may be disincentivised in career promotion paths, it may be a low-priority task left to students (for example, asking them to synthesise known substances), or it may be valued as a key aspect of the scientific package for senior, mature scientists.

Data sharing is another example: in astrophysics, sharing raw data is common practice; in genomics it is applied more modestly; and until recently, those gathering clinical trial data actively opposed sharing.

The research unit also comes in a diversity of forms. Large-scale collaboration and team science are a sine qua non in high energy physics and a dominant paradigm in human genome epidemiology. But in many other basic physicochemical and biological sciences, the dominant paradigm remains that of a single principal investigator running their lab.

And while all scientific fields use peer review, the exact mode and timing of the process (pre-design, grant-level, study-level, post-publication) also vary considerably between sciences.

What’s the difference?

Does it matter if we do things differently? In some cases, it matters a great deal. Non-replicated or refuted claims cause confusion to experts and to the general public. And different modes of peer review have different effects on the quality, accuracy and reproducibility of science.

At the very least, while optimal practices may vary from one field to another, it seems likely that scientists would benefit from being exposed to practices that have worked best in other fields. Investigating these differences and identifying effective research practices is therefore an interesting topic.

This ‘meta-research’, or research on research, is a relatively new term, but in essence, it has a history almost as old as scientific investigation itself. Its qualitative roots go back to the origins of epistemology and all scientists should have some interest in research methods and their optimisation.

For despite their differences, it seems disciplines also share a common problem: efficiency. A recent series of empirical evaluations suggests that 85% of biomedical research efforts are wasted.1 Science is a difficult, demanding endeavour, and it may be that 100% efficiency is impossible, but there is probably substantial room for improving our efficiency compared to current standards. By sharing with, and learning from, each other, we can strengthen science as a whole.

Researching research

With over 15 million publishing scientists nowadays and millions of papers published every year, meta-research is a data-rich, fertile discipline. It also offers plenty of opportunities to conduct experiments, such as randomised studies where new research practices can be compared head-to-head against established ones in controlled settings.

The Meta-Research Innovation Center at Stanford (Metrics), launched in April 2014 with generous support from the Laura and John Arnold Foundation, aims to perform such rigorous empirical research on research to identify, probe and validate best practices.

We are also eager to identify the best way to disseminate these practices across diverse scientific disciplines. This will require contributions from and collaborations with the global scientific community and stakeholders who want to promote the best possible science. Metrics aims to become a facilitating hub for such activities and to create an international web of investigators and methodologists who can stand up for good science.

Science is the best conception of the human mind and there is nothing in the physical world that cannot or should not be subjected to the scientific method. This includes the tools and practices of science itself. I invite investigators to consider this prospect and share in the Metrics initiative.

John Ioannidis is co-director, Meta-Research Innovation Center at Stanford (Metrics), Stanford University, US
