The current academic system doesn’t incentivise risk-taking

Risk is a public virtue and a private liability in science. Early-career researchers under pressure to secure a long-term contract or to draft top-quality manuscripts in a system that increasingly favours familiar science will usually decide to ‘play it safe’. This is not a personal failing, but a rational response to the incentives we have built. The question is how much gets lost under this structure.

It is a pattern familiar to many. Deliverables are key to funding panels, and supervisors depend on papers for the next round of funding. These constraints mean that it is often more practical to pursue projects with traditional methods and familiar approaches, since they can be published in a shorter time frame with less chance of failure. More exploratory and interdisciplinary projects can damage a scientist’s reputation and employability if they fail to bear fruit.

I felt this tension directly when I expanded from synthetic chemistry to machine learning during my PhD. Every hour spent learning these new tools was an hour not spent generating ‘conventional’ experimental data. Later, as a postdoctoral researcher, I diversified into mechanochemistry, with its different techniques and different ways for things to fail. With this change came opportunity costs, slow initial experimentation and the risk that early experiments might not work.

I accepted these costs because I wanted to work beyond the boundaries of my original training, and because I believed that doing so would help me to become a better scientist. But that decision ran counter to the incentives academia tends to offer. Funders have limited resources and must allocate them responsibly. This places enormous pressure on supervisors to produce output. As supervisors are evaluated on the continuity of that output, risk aversion seems to be an emergent property of the system.

But defaulting to familiar questions narrows the intellectual space of our field. Collectively, we risk becoming extremely efficient at asking only those questions to which we already know the answer.

Systems to spread risk

The response cannot be to ask researchers to compensate individually for a system that structurally suppresses risk. It may be naive or even unethical to suggest that people should simply ‘be braver’, but there are concrete mechanisms that we can implement, individually and collectively, to allow for calculated risk-taking.

One practical approach is to ring-fence time for more abstract and ambitious projects. It is also necessary to reframe failure. Not all unsuccessful experiments are wasted – they could be a valuable resource if we record them and publish their results whenever appropriate.

Conversations about these approaches need to be explicit; supervisors and research groups rarely communicate openly about risk-taking. Sustainable risk-taking also requires good back-ups: parallel approaches and tractable sub-problems can prevent one line of work that is not going as expected from becoming a single point of failure for an entire project.

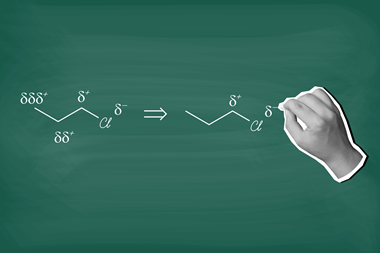

I saw this most clearly when I ring-fenced part of my time during my PhD to build computational tools to support high-throughput experimentation. For a long time, this produced little in the way of conventional outputs: no new reactions, no manuscripts in preparation. But once the tools were in place, they allowed us to interrogate chemical space more efficiently and systematically, and to prioritise experiments with a confidence that intuition alone cannot provide. Ultimately, they accelerated progress in ways that would otherwise have been impossible.

Playing it safe is a rational response to modern research culture’s institutional incentives. But rationality can still lead us to an inefficient equilibrium. If we want chemistry to do more than optimise the familiar, we need to create space for ideas that might fail. That means choosing, consciously and collectively, to occasionally roll the dice.
