Large language models can be powerful tools for chemistry if we acknowledge their limits

Working in a technology role within pharma chemistry, I currently find it impossible to evade conversations about the large language model (LLM) ChatGPT. This chatbot quickly became the fastest-growing consumer application ever, notable for making conversation with (loosely defined) artificial intelligence accessible to anybody. I signed up with my existing Google Account and can chat with the bot conversationally by typing plain English into a website. ChatGPT is also very powerful. It can summarise long texts or dig out specific information, give advice on tone in emails, edit or write code, make suggestions for projects, or do pretty much anything you ask it to – as long as you accept that the answer might be wrong. That combination of power and accessibility has made the bot the latest technology hype.

Tech buffs will be familiar with the Gartner hype cycle, a measure of excitability about any new development. Typically, once a breakthrough becomes popular, there soon follows a peak of hype and inflated expectations, often bolstered by marketers trying to make money. There’s a gold rush feeling, where everyone tries to get on board because they don’t want to be left behind. But when people take on new tools for the wrong reasons, or a use case naturally doesn’t work out, the peak gives way to a trough of disillusionment. Users are disappointed it hasn’t changed the world and made them a cup of tea at the same time, and companies relying on the tech may fail. Lastly, if the technology is actually worthwhile it eventually reaches a business-as-usual plateau with neither uncalled-for disillusionment nor overexcitement.

The Gartner hype cycle is subjective, hits different users at different times, and is also not actually a cycle. However, once a tool has already hit a hype peak, it’s not a bad observation of what, psychologically, happens next.

For academic scientists, who might benefit from the initial buzz around a big paper but don’t necessarily need the work to turn into long-term value, generating and using hype can make careers. When big tech does it, it can be worth billions. For both the academics and the tech companies, many probably feel their objective success outweighs the cost of complaints of overselling, even if the practice is ethically iffy.

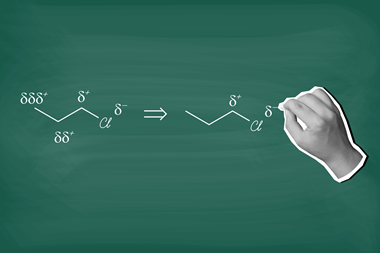

Saying that a technology or paper is hyped up suggests that some of its supposed benefits are more fluff than substance (at best), or actively wrong or harmful (at worst). And ChatGPT certainly makes mistakes. I weirdly enjoy exploring the limits of its capabilities. For example, it does have some chemical knowledge, but lacks much beyond early undergraduate-level chemical transformations – which isn’t surprising, given that most advanced insights are locked away behind subscription paywalls. Sometimes it can be correct only by lucky chance. When I asked it to suggest reaction conditions for a hypothetical Suzuki coupling it did really well, but when I expanded to other catalysed reactions I realised it essentially suggested similar sets of conditions for most transformations. Sometimes it can be wrong entirely: when I asked ChatGPT to show me some papers I had written, I was pleasantly surprised to learn of my Nature paper on electroluminescent materials, a field about which I know essentially nothing.

I also had a long argument with the bot over whether a screw was chiral: a simple request marred by the fact that it associated chirality only with molecules. Since it feels so much like text-chatting with a human, I essentially wasted time teaching a tool that can’t be taught. Its anthropomorphised stubbornness was incredibly frustrating, yet its ability to argue a point compelled me to continue.

What the hype obscures is that LLMs actually go far beyond chatbots. When accessed programmatically, they can be fed complex knowledge graphs and databases with links between many types of entity. Or, they can be purpose-built and pretuned with advanced domain knowledge, ready to pick out connections difficult or too time-consuming for a human to deconvolute. Pretuning can also enormously reduce the risk of hallucinations like my non-existent Nature paper. It is possible to tell a model to limit its responses to those from a particular corpus (for example, a company’s drug discovery knowledge base) and to provide checkable sources. Although public ChatGPT is powerful too, bespoke models could be a game changer for many uses of chemical data.
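As a rough illustration of limiting a model to a particular corpus with checkable sources, the sketch below retrieves the most relevant passages from a small knowledge base and builds a prompt that tells the model to answer only from them and to cite their IDs. Everything here is an assumption made for illustration: the `Passage` records are invented placeholders rather than real data, the word-overlap ranking stands in for a proper search index, and the resulting prompt would still need to be sent to whichever LLM API is in use.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source_id: str  # hypothetical identifier a reader could look up
    text: str


# Invented placeholder records, not real experimental data.
CORPUS = [
    Passage("NOTE-001", "Suzuki coupling of the aryl bromide used a palladium "
                        "catalyst and carbonate base in aqueous dioxane."),
    Passage("NOTE-002", "Amide coupling was run with a uronium reagent and "
                        "amine base in DMF."),
    Passage("NOTE-003", "The product was purified by reverse-phase HPLC."),
]


def tokenize(text: str) -> set[str]:
    """Lower-case word set; a crude stand-in for a real search index."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, corpus: list[Passage], top_k: int = 2) -> list[Passage]:
    """Rank passages by the number of words they share with the question."""
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(p.text)), p) for p in corpus]
    scored = [(score, p) for score, p in scored if score > 0]
    # Sort on the score only; ties keep their original corpus order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_prompt(question: str, passages: list[Passage]) -> str:
    """Wrap the question in instructions that restrict and source the answer."""
    if passages:
        context = "\n".join(f"[{p.source_id}] {p.text}" for p in passages)
    else:
        context = "(no matching passages)"
    return (
        "Answer using only the passages below and cite the bracketed "
        "source ID for every claim. If the passages do not contain the "
        "answer, say that you do not know.\n\n"
        f"Passages:\n{context}\n\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    question = "Which conditions were used for the Suzuki coupling?"
    print(build_prompt(question, retrieve(question, CORPUS)))
```

Because every passage carries an ID, a reader can check each cited claim against the original record, which is the property that makes a fabricated reference easier to catch. Instructions like these reduce the risk of invented answers but do not remove it.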

I’m really looking forward to finding out where LLMs will take pharma and chemistry. They are becoming more impressive month on month, and with safeguards and some common sense, their power to contextualise information and rapidly produce outputs is considerable. As for the public version, as soon as I gained access to the more advanced GPT-4, the first question I asked was whether a screw was chiral. Without even the slightest hesitation, it correctly answered yes.
