Chemists must embrace open data to allow us to collectively get the best out of the masses of new knowledge we unearth, reports Clare Sansom

The ‘data mountain’ in chemistry is growing ever taller. It is difficult to work out exactly how much chemical data is out there, but Ewan Birney, associate director of the European Bioinformatics Institute (EBI) near Cambridge, UK, has estimated that the rate of data generation in the life sciences is ‘only an order of magnitude less than that from the Large Hadron Collider’. That is a lot of terabytes; much of it is chemical data, and more is closely linked to chemistry. It is enormously important – but also enormously challenging – for all chemists to be able to access and make full use of this resource.

Historically, the chemical community has lagged behind molecular biologists in developing both the policies and the software to enable its data to be widely used. This is partly because molecular biology was arguably ahead of chemistry in becoming a ‘big data’ discipline, but it is also the result of deliberate decisions by leading biologists. The agreement in 1996 that all data obtained in the Human Genome Project should be made freely accessible within 24 hours is rightly regarded as a model for open access in all scientific disciplines.

Chemistry, however, is now catching up. Anne Hersey, coordinator of the open access ChEMBL database group at the EBI, published an editorial in the journal Future Medicinal Chemistry in 2012 arguing that pharmaceutical chemists should learn from the biological community and make as much of their data as possible widely accessible, preferably in machine-readable formats. Drug discovery, she suggested, is becoming a much more diverse process, with academic and charitable labs, which have no big budgets to spend on data access, beginning to play as important a role as their commercial counterparts. Three years later, there is clear evidence that this change has started in earnest.

A global community

One organisation helping with the move towards open access databases is the Research Data Alliance (RDA). The RDA – set up with funding from the European Commission, the US National Science Foundation and the Australian government – supports and promotes open data and data sharing in all its forms across all scientific disciplines. It is free for anyone to join, and volunteer experts come together in working and interest groups to develop the technical and social infrastructure that is needed for open data sharing.


UK bioinformaticians Andrew Harrison from the University of Essex and Hugh Shanahan from Royal Holloway, University of London, who have worked together in several of these groups, explain how aggregating data from different sources can add value. ‘High throughput data generation, such as the simultaneous measurement of tens of thousands of gene expression levels, has a reproducibility problem,’ Harrison says. ‘If you do the same analysis several times the results will often be different. These experiments only become reproducible, and thus really useful, when data from many studies is combined; we can now offer “science as a service” rather than expecting one lab to do everything.’ Scientists in many disciplines may learn more by looking for patterns in others’ data than by generating their own. Research assessors may not like it, but the most useful ‘unit of research’ is arguably no longer the research paper: it is the dataset.

This is not a new concept. In fact, it harks back to the way some important discoveries were made more than a century ago. In chemistry, for example, Dmitri Mendeleev put together the first widely accepted version of the periodic table in 1869 by observing patterns in data on the then-known elements, data that had been collected over the preceding centuries. He even used those patterns to predict the properties of elements that were still unknown.
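Harrison’s point that combining many noisy studies gives a more reproducible answer can be illustrated with a standard fixed-effect meta-analysis, in which each study’s estimate is weighted by the inverse of its variance. This is a minimal sketch under that assumption, not a method described by Harrison or Shanahan: the function name `pool_estimates` and the three expression values are hypothetical.

```python
import math


def pool_estimates(estimates, std_errors):
    """Fixed-effect (inverse-variance) pooling of independent estimates.

    Each study's estimate is weighted by 1 / SE**2, so more precise studies
    count for more. Returns the pooled estimate and its standard error.
    """
    if not estimates or len(estimates) != len(std_errors):
        raise ValueError("need one standard error per estimate")
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * x for w, x in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical log2 fold-changes for one gene from three separate studies,
# each reported with a standard error of 0.5.
pooled, pooled_se = pool_estimates([1.2, 0.6, 0.9], [0.5, 0.5, 0.5])
print(f"pooled estimate {pooled:.2f}, standard error {pooled_se:.2f}")
```

With three equally precise studies the pooled standard error falls from 0.5 to 0.5/√3, or about 0.29. That is the narrow statistical sense in which combined data are more reproducible than any single run. Real gene-expression studies usually also need to account for differences between labs, which this fixed-effect sketch ignores.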

Currently, relatively few chemists are active in the RDA, but there is valuable work for many more to do, and this should directly benefit the discipline as a whole. ‘We want everyone to join; the more chemists who join the RDA, the more their voices will be heard in the open data community,’ says Harrison.

Input options

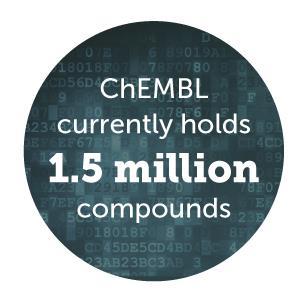

To date, medicinal chemists are probably the most active of all the chemistry sub-disciplines in terms of depositing, using and sharing open data. ChEMBL is one of the most widely used open chemical databases, and it focuses entirely on the structures and properties of pharmacologically active molecules. This database currently holds about 1.5 million such compounds, with 13.5 million related bioactivity data points. The structure of each compound is stored in several human- and computer-readable formats and is linked to both calculated physicochemical properties and biological assay data. Much of the data in ChEMBL is curated manually. The work of extracting data from tables in the published literature and entering it into the database has been outsourced, and the resulting datasets are very accurate. But not all of this data is as precise as it could be. ‘We provide mainly 2D structures, extracting 3D information only for those compounds that are present as ligands in the Protein Data Bank. This data is not as high in precision as 3D data from small-molecule crystal structures. There is, however, plenty of software available for converting 2D chemical structures into 3D,’ explains Hersey.

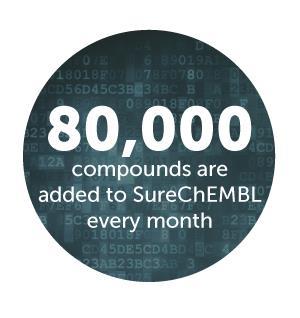

Hersey describes another open access EBI chemical database, SureChEMBL, as ‘a totally different beast’. Its data is extracted automatically from the full text of patents published by the US and European patent offices and the World Intellectual Property Organization, and from the English-language abstracts of Japanese patents. It is growing at a rate of about 80,000 compounds a month and already holds chemical data on about 16 million compounds from over 13 million annotated patents. ‘Every chemical entity recognised as such in a patent goes into this database, so we have to develop smart ways to filter it,’ says Hersey. ‘Some compounds, like biologically active lead molecules, are intrinsically more interesting than, for instance, commonly used reagents.’

The software used to generate SureChEMBL entries automatically converts chemical names, as well as images of 2D structures, into machine-readable structures. The quality of an image conversion naturally depends on the quality of the original patent image. Both Inchi (International Chemical Identifier) records and Smiles (simplified molecular-input line-entry system) strings are generated. An Inchi, like a Smiles string, is a linear text-based record that defines a chemical entity, but it is organised into layers: the first is the molecular formula, followed by the connectivity and, where appropriate, layers for charge, stereochemistry and isotopes. ‘There are advantages and disadvantages to both data types. An Inchi is superior to Smiles as a general chemical identifier because it is the same however it is generated, whereas different programs may generate different Smiles strings from the same structure: however, only Smiles can be used for substructure searching,’ explains Hersey.
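A few lines of standard-library Python show the layered structure of an Inchi and the fact that one molecule can be written as several different Smiles strings. This is a simplified sketch, not a validating parser. The helper `inchi_layers` is hypothetical, and it keeps only the first occurrence of each layer prefix, so sublayers repeated inside, for example, an isotopic layer are ignored. Reducing the Smiles variants to one canonical string would need a cheminformatics toolkit such as RDKit, which is deliberately not used here.

```python
def inchi_layers(inchi):
    """Split a standard InChI string into its '/'-separated layers.

    The formula layer has no prefix; later layers start with a lowercase
    letter, e.g. c (connectivity), h (hydrogen atoms), q (charge),
    b/t/m/s (stereochemistry) and i (isotopes).
    """
    prefix = "InChI="
    if not inchi.startswith(prefix):
        raise ValueError("not an InChI string")
    version, formula, *rest = inchi[len(prefix):].split("/")
    layers = {"version": version, "formula": formula}
    for part in rest:
        if part:
            # Keep only the first layer with a given prefix (a simplification).
            layers.setdefault(part[0], part[1:])
    return layers


ethanol = inchi_layers("InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3")
print(ethanol)
# {'version': '1S', 'formula': 'C2H6O', 'c': '1-2-3', 'h': '3H,2H2,1H3'}

# Three equally valid Smiles strings for ethanol: comparing the raw text
# would wrongly treat them as three different compounds.
ethanol_smiles = ["CCO", "OCC", "C(O)C"]
print(len(set(ethanol_smiles)))  # 3
```

The Inchi for ethanol is a single fixed string, whereas the Smiles list shows why an identifier that is "the same however it is generated" is attractive for matching records across databases.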

A web of data

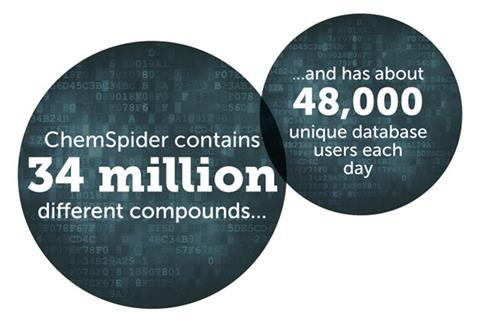

ChemSpider, another freely available and widely used database, was set up by Antony Williams in the US to trawl the internet automatically for chemical data and integrate it into a single database. It began almost as a hobby project, but since its acquisition by the Royal Society of Chemistry in 2009 it has grown to 34 million compounds and covers a larger and more representative part of ‘chemical space’ than the pharmacologically focused ChEMBL.


Each new ChemSpider record is automatically checked for serious errors such as impossible structures; more minor problems, such as missing stereochemistry, are flagged with warnings. If users notice errors that have been missed by automatic checks – as they not infrequently do – they can flag them for correction. Richard Kidd, publisher for data and databases at the RSC, describes the database curation model as ‘a bit of a mix between Google and Wikipedia’. A small in-house team is helped with manual corrections by a group of volunteers, including experts in their fields. ChemSpider currently has about 48,000 unique database users each day from pure and applied chemistry and related disciplines, says Kidd. ‘Companies see it as a valuable addition to their internal resources, and it is widely used in teaching, particularly but not only in higher education.’
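A valence test is one of the simplest automatic checks for an ‘impossible structure’: no atom should carry more bonds than its element allows. The sketch below assumes a hypothetical representation, a list of element symbols plus (atom, atom, bond order) tuples, and a deliberately small valence table for neutral atoms. It is not ChemSpider’s actual validation code. Real checks must also handle charges, elements with several possible valences, and much more.

```python
# Maximum total bond order for some neutral atoms (illustrative subset only).
MAX_VALENCE = {"H": 1, "C": 4, "N": 3, "O": 2, "F": 1, "Cl": 1, "Br": 1, "I": 1}


def check_valences(atoms, bonds):
    """Return (errors, warnings) for a simple molecular graph.

    atoms: element symbols, indexed from 0
    bonds: (i, j, order) tuples with order 1, 2 or 3
    Hydrogens that are not listed are treated as implicit, so only
    over-bonded atoms are caught. Errors mark atoms whose bond orders
    exceed the allowed valence; warnings mark elements with no rule here.
    """
    used = [0] * len(atoms)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order
    errors, warnings = [], []
    for idx, (element, total) in enumerate(zip(atoms, used)):
        limit = MAX_VALENCE.get(element)
        if limit is None:
            warnings.append(f"atom {idx}: no valence rule for {element}")
        elif total > limit:
            errors.append(f"atom {idx}: {element} has bond order {total} > {limit}")
    return errors, warnings


# A carbon bonded to five fluorine atoms cannot exist as a neutral molecule.
print(check_valences(["C"] + ["F"] * 5, [(0, k, 1) for k in range(1, 6)]))
# (['atom 0: C has bond order 5 > 4'], [])
```

Splitting the output into errors and warnings loosely mirrors the distinction described above between serious problems and less serious ones that are merely flagged.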

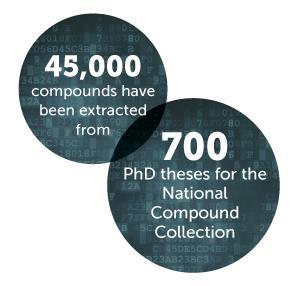

Despite ChemSpider’s huge size and rapid expansion, its developers are still investigating new ways to make published and unpublished data more widely accessible. A pilot project led from the University of Bristol, which aimed to make the case for a National Compound Collection, extracted about 45,000 compound records from 700 PhD theses and entered the data into ChemSpider (see Chemistry World, April 2015, p10). ‘If all chemistry PhD candidates submitted their data to registries when they submit their theses,’ says Kidd, ‘this would make their work more visible and valuable when released – but we need to convince them of the value of this additional effort.’

Each molecule in ChemSpider is unambiguously identified using an Inchi. Despite the best efforts of the International Union of Pure and Applied Chemistry, the definitions of other chemical concepts are more ambiguous, and misunderstandings can arise, particularly in international collaborations. The RSC has produced and promoted the use of controlled vocabularies, or ontologies, for chemical concepts. The inspiration for the first RSC ontology, the Reaction Ontology (RXNO), came from hearing about a gene ontology for biochemical processes being developed at the EBI. ‘We realised that the chemical community had similar problems when discussing organic reactions,’ says Colin Batchelor, data scientist at the RSC. ‘It is a bit like chess – every chemist needs to recognise precisely what is meant by the term Grignard reaction, just as every chess player can recognise a Ruy Lopez opening.’ The software company NextMove has developed a tool to take reactions directly out of electronic lab notebooks and classify them using RXNO. Medicinal chemists at pharmaceutical giant AstraZeneca have found this useful for checking whether methods they are considering have already been tried in house.
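Part of what an ontology adds to a plain list of terms is structure. Each reaction class sits inside broader classes, so a query for a general class also finds records tagged with narrower ones. The fragment below is a hand-made, hypothetical hierarchy that does not reproduce RXNO’s actual terms or identifiers, and the notebook record IDs are invented. It only illustrates how an ‘is a’ hierarchy supports the kind of in-house search described above.

```python
# Hypothetical, hand-made fragment of a reaction ontology (not taken from RXNO):
# each term points to its broader parent term ("is a").
IS_A = {
    "Grignard reaction": "carbon-carbon bond formation",
    "Suzuki coupling": "carbon-carbon bond formation",
    "amide coupling": "carbon-heteroatom bond formation",
    "carbon-carbon bond formation": "reaction",
    "carbon-heteroatom bond formation": "reaction",
}


def ancestors(term):
    """Return the chain of broader terms above `term`, nearest first."""
    chain = []
    while term in IS_A:
        term = IS_A[term]
        chain.append(term)
    return chain


def records_under(records, query):
    """Return IDs of notebook records classified as `query` or any narrower term."""
    return [rid for rid, term in records.items()
            if term == query or query in ancestors(term)]


# Invented electronic lab notebook entries, each tagged with one term.
records = {"ELN-001": "Grignard reaction", "ELN-002": "amide coupling",
           "ELN-003": "Suzuki coupling"}
print(records_under(records, "carbon-carbon bond formation"))  # ['ELN-001', 'ELN-003']
```

Because every record is tagged with an agreed term rather than free text, a search for the broader class finds both the Grignard and the Suzuki entries without anyone having to list synonyms by hand.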

Text mining

Extracting chemical (or any other) data from the literature and converting it into database entries requires a set of methods grouped together under the term ‘text mining’. This is now a recognised sub-discipline within informatics, and it has many applications in chemistry, the life sciences and medicine. The UK’s National Centre for Text Mining (Nactem) in Manchester has close links with the University of Manchester’s Centre for Integrative Systems Biology. Researchers there have taken part in Biocreative (Critical Assessment of Information Extraction Systems in Biology), a series of challenges for evaluating methods of extracting information from biomedical text. Biocreative 4, in 2013, included several tasks in the area of chemical entity recognition. ‘Our team took top place in several tasks, including a specific task to curate a toxicogenomics database,’ says Nactem’s director, Sophia Ananiadou. ‘We designed a modular platform, Argo, which can easily incorporate the text mining tools that are most appropriate for a particular task, including commercial ones if these are available.’
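Chemical entity recognition is easiest to picture in its simplest form: matching text against a dictionary of known names. The sketch below shows only that dictionary step, with a tiny hypothetical lexicon. Competitive systems, including those assessed in Biocreative, often combine large curated dictionaries with machine-learned models for unseen names, and nothing here describes Argo’s actual components.

```python
import re

# Tiny illustrative lexicon (hypothetical); real systems use far larger
# curated dictionaries plus statistical models.
LEXICON = ["acetylsalicylic acid", "aspirin", "ethanol", "sodium chloride"]


def find_chemicals(text, lexicon=LEXICON):
    """Return (start, end, matched text) spans for lexicon names found in text.

    Longer names are tried first so that multi-word names match as a whole,
    and word boundaries stop 'ethanol' matching inside 'methanol'.
    """
    names = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, names)) + r")\b",
                         re.IGNORECASE)
    return [(m.start(), m.end(), m.group(0)) for m in pattern.finditer(text)]


text = "Aspirin (acetylsalicylic acid) was dissolved in ethanol."
print([name for _, _, name in find_chemicals(text)])
# ['Aspirin', 'acetylsalicylic acid', 'ethanol']
```

Returning character offsets rather than just names is a common design choice, because downstream curation tools need to show each match in its original context.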

Bioinformatician Soren Brunak from the Technical University of Denmark, who is better known for protein sequence analysis algorithms, has set up a project to mine Danish medical records for data, following the same patients over many years to study disease risk. He cites some results of this work: ‘We have found that the short-term prognosis for patients in an intensive care unit, or their experiences of adverse drug reactions, may depend as much on their medical history over decades as on genetic differences.’

Brunak’s and Ananiadou’s groups depend on free access to their raw material, whether journal articles or medical records. As a result, groups like these tend to be strong advocates for open data, and Denmark and the UK are currently among the most open countries in the world in this respect. The complete medical records of all Danes are kept for decades, and this data is available to all researchers. In the UK, as Ananiadou says, ‘Recent changes to UK copyright law mean that, as well as being able to text mine open access journals as before, researchers here can now text mine subscription journals for non-commercial purposes, provided they have lawful access, for example via their university library’s subscriptions. Thus the situation has improved a lot, and is contributing to a re-assessment of European copyright laws.’

Aiding drug development

Matthew Todd, associate professor of organic chemistry at the University of Sydney, Australia, is taking the concept of open data further still. His group works with open lab notebooks, in which everything that is done – every synthesis, whether it succeeds or fails – is released into the public domain. ‘We call this open source research: anyone can access our records at any time, and anyone with access to a lab is able to become an equal partner in our projects, helping to direct the research before it’s done,’ he says. His group is focusing on the development of anti-malarial drugs through the Open Source Malaria Consortium and keeps a complete and easily searchable record of every molecule synthesised within that project, including intermediates.

Todd’s idea of ‘drug discovery without secrecy’ is an extreme example of the trend that has seen early-stage drug discovery move from big pharma into smaller companies and, increasingly, into the public and charitable sectors. Harnessing ‘the wisdom of the crowd’, from senior scientist volunteers to high school students, can pay great dividends but will not be enough to take a molecule into the clinic. ‘A full clinical trial of an anti-malarial drug costs about $30 million (£19 million): this is not a lot compared to the cost of a similar trial in oncology, but it is a barrier for even the best funded government bodies and philanthropic organisations,’ says Todd.

That said, at least some of the big pharma companies have made significant investments in drug development for tropical diseases. GlaxoSmithKline (GSK) has built a research campus at Tres Cantos near Madrid in Spain that is dedicated to the development of novel drugs for tuberculosis, malaria and diseases caused by kinetoplastid protozoa. This campus is home to the Open Lab Foundation, which hosts independent researchers from across the world working on these diseases. Its work is kept as open as possible: its academic collaborators include open data advocates like Todd, and all its results are rapidly released into the public domain. ‘We have had some notable successes already as a consequence of our open innovation approach, including the identification within the Orchid EU-funded consortium of a beta-lactam antibiotic that can be re-purposed for tuberculosis,’ says Lluis Ballell, head of the Open Lab at GSK Tres Cantos.

Open data resources are also widely used in the small biotech sector. John Overington, until recently head of cheminformatics at the EBI, has now joined a London-based biotech, Stratified Medical, as head of bioinformatics. This company is using an artificial intelligence approach known as ‘deep learning’ to accelerate drug discovery. It builds its huge datasets from public databases such as ChEMBL and the Generated Database of Molecules. The latter enumerates possible small molecules containing up to 17 ‘heavy atoms’, that is, atoms other than hydrogen, of the kinds most commonly found in drug molecules, such as carbon, nitrogen, oxygen and fluorine. ‘Our datasets are so large that the database software we had been using kept falling over,’ says Overington. ‘The new solutions that we are developing are based largely on open source software.’
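The size limit on the Generated Database of Molecules can be made concrete. Heavy atoms are all atoms other than hydrogen, so the limit can be checked directly from a molecular formula. The sketch below is a hypothetical filter that applies only two limits: at most 17 heavy atoms, drawn here solely from the four elements the text names (carbon, nitrogen, oxygen and fluorine). It is not how the database itself was generated, and it accepts only simple formulas without brackets, charges or isotope labels.

```python
import re


def element_counts(formula):
    """Parse a simple formula such as 'C9H8O4' into {'C': 9, 'H': 8, 'O': 4}.

    Brackets, charges and isotope labels are not supported in this sketch.
    """
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts


def within_limits(formula, max_heavy=17, allowed=frozenset({"C", "N", "O", "F"})):
    """True if the formula has at most max_heavy non-hydrogen atoms, all in allowed."""
    counts = element_counts(formula)
    heavy = {el: n for el, n in counts.items() if el != "H"}
    return sum(heavy.values()) <= max_heavy and set(heavy) <= allowed


for name, formula in [("aspirin", "C9H8O4"), ("caffeine", "C8H10N4O2"),
                      ("chloroform", "CHCl3"), ("octadecane", "C18H38")]:
    print(name, formula, within_limits(formula))
```

Aspirin (13 heavy atoms) and caffeine (14) pass. Chloroform fails on its chlorine atoms and octadecane on its 18 carbons.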

Many chemists, then, are heeding Hersey’s advice to learn from their counterparts in the life sciences. They may have come late to the open data party, but they are now not only working the data mine but also sharing data more widely and efficiently, and using it in smart and useful ways.
