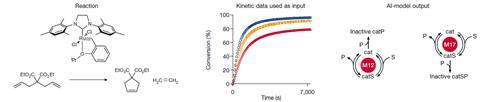

A new artificial intelligence (AI) tool can classify chemical reaction mechanisms using concentration data to make predictions that are 99.6% accurate with realistically noisy data. Igor Larrosa and Jordi Bures from the University of Manchester have made the model freely available to help progress ‘fully automated organic reaction discovery and development’.

‘There is a lot more information within kinetic data than chemists have been able to extract traditionally,’ comments Larrosa. The deep learning model ‘does not just match but surpasses what chemist experts on kinetics would be able to do with previous tools’, he claims.

Larrosa adds that chemistry is at a unique turning point for AI tools. As such, the Manchester chemists sought to design a model with the ideal capabilities for reaction classification. Bures and Larrosa combined two different neural networks. First, a long short-term memory neural network tracks concentration changes over time. Second, a fully connected neural network processes what comes out of that first network.

The final model contains 576,000 trainable parameters. The parameters describe ‘mathematical operations that are carried out on the kinetic profile data’, Larrosa explains. These operations then produce probabilities for which mechanism the data arises from. ‘For comparison, AlphaFold uses 21 million parameters and GPT3 uses 175 billion parameters,’ he adds.

Catalyst insights

Bures and Larrosa trained the model with 5 million simulated kinetic samples, labelled with which one of the 20 common catalytic reaction mechanisms the sample relates to. Once the model has learned to recognise the characteristics of the kinetic data associated with each reaction mechanism it ‘applies those rules to new input kinetic data to classify it’, says Bures. The first of the 20 is the simplest catalytic mechanism, described by the Michaelis–Menten model. Bures and Larrosa group the rest as mechanisms involving bicatalytic steps, those with catalyst activation steps and those with catalyst deactivation steps, the latter being the largest group.

Simulated data is needed for high classification performance, Bures adds, because experimental data is inevitably noisy and hard to interpret. ‘Experimental data and corresponding chemist’s conclusions should not be used for training because the resulting model would be, at best, as accurate as an average chemist, and more likely less accurate,’ he says.

To test the trained model, Bures and Larrosa used more simulated data, which only caused 38 classification mistakes in 100,000 samples. To simulate real experiments more closely, the chemists added noise to the data. That reduced accuracy to 99.6% with realistic levels of noise and 83% with what Larrosa calls ‘the absurd extreme of noisy data’.

The chemists also applied the model to data from previously published experiments. ‘While the correct answer for these cannot be known, the model proposed mechanisms that are chemically sound,’ says Larrosa. The results also provided new insights into how catalysts for reactions including ring-closing olefin metathesis and cycloadditions decompose. ‘Understanding catalyst decomposition pathways is hugely important to be able to make reproducible processes,’ Larrosa underlines.

Marwin Segler from Microsoft Research AI4Science calls the work ‘a fantastic demonstration of how machine learning can help creative scientists to unravel nature and solve hard chemical problems’. ‘We need better tools like this to discover novel reactions to make new drugs and materials and make chemistry greener,’ he says. ‘It also highlights how powerful simulations can be to train AI algorithms, and we can expect to see more of that.’

References

J Bures and I Larrosa, Nature, 2023, DOI: 10.1038/s41586-022-05639-4

No comments yet