In 1988 Nature’s editor John Maddox laid down the gauntlet to computational chemists when he said that ‘One of the continuing scandals in the physical sciences is that it remains impossible to predict the structure of even the simplest crystalline solids from a knowledge of their composition.’ In the ensuing decades, great strides have been taken to crack that problem.1 Now, Matthew Rosseinsky, Paul Spirakis and colleagues at the University of Liverpool, UK, claim to be able to reliably find the lowest-energy or ground-state structure of a range of crystalline compounds given only their stoichiometric formulae.2

Being able to predict crystal structures would be a tremendous tool for discovering useful new materials. Although many methods now exist for such prediction, one of the big challenges is confidence that the estimated energies of predicted structures have identified the global optimum. The algorithm devised by the Liverpool team now offers ‘optimality guarantees’ for the structures it identifies.

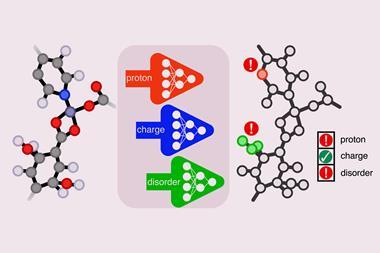

The method uses a computational technique called integer programming, widely used in areas such as logistics, healthcare and finance. This entails mapping the ‘space’ of the quantity being optimised – here the energy as a function of atomic configuration – by coarse-graining it into a series of discrete coordinates. It is rather like plotting the continuous topography of a landscape by interpolating from height measurements made at many locations. For each discrete atomic configuration, the energy is calculated by summing up interactions between pairs of atoms or ions based on simple pair potentials that assume long-ranged electrostatic interactions and short-range repulsion.

A big problem

In general, even a relatively simple compound containing just a few atoms in the unit cell will have an immense number of permutations of atoms, if their possible locations are closely spaced. To make the calculation tractable, Rosseinsky and colleagues use another computational trick called branch-and-cut optimisation, which enables them to quickly eliminate much of the potential search space because an optimal solution will evidently not be found there.

‘Branch-and-cut is a clever and systematic way to crunch through the whole space of coarse-grained structures,’ says co-author Vladimir Gusev. ‘It splits the structure space into subclasses, and for every such subclass there will be a guaranteed bound on how low in energy the structures can be – a worthiness score, if you like.’ If the class is large, that bound might be rather poorly constrained, and so the class can be split further – hence the branching. ‘You use the worthiness score to guide and prioritise exploration and to exclude some classes completely from consideration,’ says Gusev.

Once the best or lowest-energy structure has been identified in this discretised space, the algorithm performs a ‘local’ optimisation in which the atomic positions can adjust or relax in continuous space to find their equilibrium values.

‘Integer programming allows you to exhaustively screen coarse-grained structures in a reasonable time,’ says Rosseinsky. ‘Since it provides exact solutions every time, we can use them to reason about the continuous space in which the actual structures exist, and search for the ground-state structures.’ The method can also be used with quantum optimisation methods like quantum annealing.

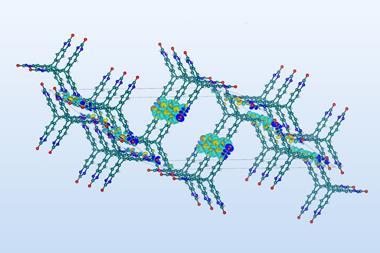

In this way, Rosseinsky and colleagues calculated the crystal structures for several inorganic compounds, including rather complex mineral phases such as spinel (MgAl2O4) and garnet (Ca3Al2Si3O12). The latter has 62 unique atomic positions in the lattice, and the algorithm could find this ground-state structure in one second on a desktop computer. The spinel structure was harder to find and took just over an hour.

The key to developing the method, says Rosseinsky, was ‘investing the time to work across the physical and computer sciences, to develop a shared understanding to address the problem. People are not often trained in both disciplines, and they approach problems differently.’

Drawbacks could constrain use

While this new approach adds to the available methods for finding globally optimal crystal structures, it has limitations, says computational chemist Artem Oganov of the Skolkovo Institute of Science and Technology in Moscow. Like all methods, it faces an exponential increase in the number of possible configurations as the number of atoms increases. Oganov points out that the structures solved so far are all relatively simple cubic crystals, and anticipates problems with non-cubic structures, as well as ones with large open spaces such as zeolites and molecular crystals. He adds that, as the branch-and-cut approach is just a heuristic method, the ‘optimality guarantee’ is not in fact rigorous.

Materials scientist Chris Pickard of the University of Cambridge also worries that so far the range of structures used to validate the method is small, and that it might not work for molecular crystals. In that case, ‘the shapes of the unit cells are rarely cubic or highly symmetric, and I think a very dense grid would be needed to capture both the molecules and the spaces between them’.

Materials scientist Gerbrand Ceder of the University of California at Berkeley says that ‘although the paper is an interesting new approach, it is not clear how practical it is’. He says that the use of simplified pair potentials may not be the most accurate way to calculate energies, and agrees there is no guarantee that the solutions found for the fixed lattice remain global optima once the atoms are allowed to relax.

All the same, Pickard calls the work ‘a significant and thought-provoking advance’. Although in its present state he thinks it ‘is not likely to replace the algorithms we are using day-to-day’, he adds that ‘it will be very interesting to see how it develops, and what other ideas it spawns’.

References

1 CRA Catlow, IUCrJ, 2023, 10, 143 (DOI: 10.1107/S2052252523001835)

2 VV Gusev et al, Nature, 2023, 619, 68 (DOI: 10.1038/s41586-023-06071-y)

No comments yet