Toxicologists must embrace better ways of accounting for low-dose effects, argue Annamaria Colacci and Nicole Kleinstreuer

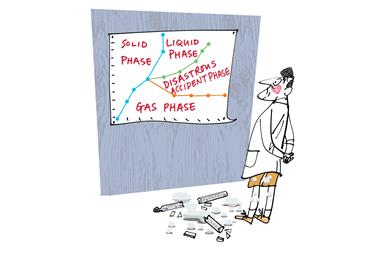

Toxicological risk assessment is an area of scientific enquiry with deep and far-reaching implications for society. Traditionally, it has relied heavily on the concept of the monotonic dose-response relationship, embodied by Paracelsus’s statement ‘the individual response of an organism to a chemical increases proportionally with the dose’. Now it is becoming increasingly clear that we need a more nuanced approach for low doses of chemical mixtures.

Regulators typically use monotonic dose-response curves obtained from experimental studies in animals to distinguish between normal biology, adaptive responses and adverse effects. In such studies, the animals are often exposed to doses that far exceed anything humans are likely to encounter in the real world. For the purposes of regulation, the onset of adverse effects is key to determining the level of exposure that presents an unreasonable risk for humans and ecosystems.

For many substances, it is possible to use these curves to identify a threshold below which the risk of such effects may be considered negligible. However, there will usually be little or no experimental data in this lower-dose range, nor will genetic differences and intermediate functional changes have been measured. Rather, the threshold will have been determined by extrapolating from higher-dose data, with an implied assumption that the monotonic dose-response curve holds true at low doses.
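To make the extrapolation step concrete, the following is a minimal sketch in Python, not a regulatory method. It assumes a straight-line dose-response model fitted by ordinary least squares, and the doses, responses and 'negligible' response level are hypothetical values chosen for illustration; the function names are ours.

```python
def fit_line(doses, responses):
    """Least-squares fit of response = slope * dose + intercept."""
    n = len(doses)
    mean_d = sum(doses) / n
    mean_r = sum(responses) / n
    sxx = sum((d - mean_d) ** 2 for d in doses)
    sxy = sum((d - mean_d) * (r - mean_r) for d, r in zip(doses, responses))
    slope = sxy / sxx
    intercept = mean_r - slope * mean_d
    return slope, intercept


def extrapolated_threshold(doses, responses, negligible_response):
    """Dose at which the fitted line reaches the 'negligible' response.

    Assumes the monotonic (here, linear) relationship seen at high
    doses continues to hold below the lowest tested dose.
    """
    slope, intercept = fit_line(doses, responses)
    if slope <= 0:
        raise ValueError("fitted response does not increase with dose")
    return (negligible_response - intercept) / slope


# Hypothetical high-dose animal data (arbitrary units).
doses = [50.0, 100.0, 200.0, 400.0]
responses = [10.0, 20.0, 40.0, 80.0]

# Hypothetical response level treated as negligible.
threshold = extrapolated_threshold(doses, responses, negligible_response=1.0)

# True when the threshold lies below every dose actually tested,
# i.e. it rests on the model assumption rather than on measurements.
is_extrapolated = threshold < min(doses)
print(threshold, is_extrapolated)
```

In this illustration the derived threshold falls well below the lowest tested dose, so it is supported only by the assumed shape of the curve. A non-monotonic response at low doses would not be visible in such a fit.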

The problem of mixtures

There is now considerable debate over whether this is reasonable, especially in the context of the chemical mixtures frequently found in the environment. Through workshops and the meetings of scientific panels, the European Commission has reached a consensus on the need to consider potential adverse effects from low doses relevant to humans and ecosystems. Meanwhile, the US Environmental Protection Agency (EPA) has partnered with other federal agencies and the National Research Council (NRC) to address how such effects should be included in risk assessment. The question is whether traditional testing approaches are sufficient. If not, should they be integrated with newer approaches, or are more extreme changes in chemical testing and toxicological modelling needed?

The results from the Halifax Project, run by an international consortium of 174 scientists, suggest some chemicals considered non-carcinogenic in isolation may increase cancer risk when present in the environment in certain mixtures.1 This raises the question of whether such chemicals are promoting tumours through molecular mechanisms that the current suite of standard tests is unable to identify. Furthermore, will a mixture of chemicals affecting different cancer hallmark pathways lead to a synergistic effect on carcinogenesis that individual chemical tests cannot capture? We know that chemicals sharing the same mechanism may act together to induce additive effects, as captured in a recent cumulative risk assessment on phthalates.2 In addition, mammalian studies have demonstrated that synergistic effects can be induced by chemicals co-present in a mixture.3

Alternative approaches

The solution in certain circumstances may be the concept of the threshold of toxicological concern (TTC), used by the US Food and Drug Administration (FDA) for regulating food packaging components. It is a structure-based approach, which provides a conservative exposure threshold, driven by the toxicity of the known substances. Some in the risk assessment community have proposed variants of the TTC approach to overcome specific chemical mixture assessment challenges, including those relating to unknown molecules and synergistic effects, and to set exposure levels for pesticides, industrial chemicals, pharmaceuticals, aerosols and personal care products.

In general, however, the TTC approach suffers from a limited domain of applicability, leading others to put forward more informed, mechanistically driven and probabilistic assessments of chemical impacts on biological pathways. Novel technologies and high-throughput screening (HTS) assays, such as those used in the EPA ToxCast and federal Tox21 research programmes, could allow mechanistic identification of adverse effects at doses much lower than those used in standard animal experiments. Meanwhile, programmes such as ExpoCast integrate monitoring and modelling to characterise bioactivity in the context of exposure potential. Taking things in a slightly different direction, in December 2014 the EPA scientific advisory panel evaluated the concept of an integrated bioactivity exposure ranking (IBER), which would extend point estimates of bioactivity, toxicokinetics and exposure for chemicals to distribution ranges based on uncertainty and population variability.

The risk assessment community needs to embrace mechanism-based approaches that focus on biological pathways to overcome the limitations of how we currently discriminate between adaptive responses and adverse effects, as well as characterise environmental chemical contributions. These, used in conjunction with probabilistic frameworks to capture uncertainty and variability, should enable us to more accurately assess the risks of real exposure scenarios.

Annamaria Colacci is a toxicologist at the Regional Environmental Protection Agency for Emilia Romagna in Bologna, Italy.

Nicole Kleinstreuer is a computational toxicologist at Integrated Laboratory Systems, US.

References

1 W H Goodson et al, Carcinogenesis, 2015, 36 Suppl 1, S254–96

2 US Consumer Product Safety Commission, Chronic hazard advisory panel on phthalates and phthalate alternatives, 2014

3 A Boobis et al, Crit. Rev. Toxicol., 2011, 41, 369 (DOI: 10.3109/10408444.2010.543655)
