Mass spectrometry can be used for far more than small molecules, making it a vital tool in drug discovery and in hospitals, as Clare Sansom discovers

The mass spectrometer is about a century old. In 1913, the UK physicist J J Thomson – best known for discovering the electron – passed a beam of ionised neon through electric and magnetic fields and measured its deflection with a photographic plate. He found that the beam divided into two, producing two spots, and concluded, correctly, that these were composed of neon atoms with different masses: the isotopes ²⁰Ne and ²²Ne. His student Francis Aston, later also a Nobel laureate, built the first functional machine to separate atomic species based on mass and charge – the first mass spectrometer – in Cambridge in 1919. The basic principles of that design are still used today, and mass spectrometry (MS) remains the most accurate and precise laboratory method for determining molecular masses.

The first practical uses of mass spectrometry were to separate atoms into isotopes, as Thomson had done with neon. A type of mass spectrometer was used in the Manhattan Project to separate isotopes of uranium and produce enriched uranium for ‘Little Boy’, the bomb that devastated Hiroshima. Molecules, particularly large ones, present more of a challenge: how can the molecule be ionised without breaking some of its bonds? For decades this limited MS to determining the masses of ‘small’ (and medium-sized) molecules.

It was the invention in the 1980s of ‘soft’ ionisation techniques, which allow large molecules to be ionised while remaining intact, that made MS a mainstay for determining the mass of entities as large as a virus capsid or as complex as a human ribosome. Many soft ionisation techniques have been developed in the decades since, but matrix-assisted laser desorption ionisation (Maldi) and electrospray ionisation (ESI) are the most widely used. Analytical mass spectrometry now has an enormous range of practical applications, all much more benign than that first military use in the 1940s: in geochemistry, environmental and forensic science, several parts of the drug discovery pipeline and clinical medicine, among others.

Matrix methods

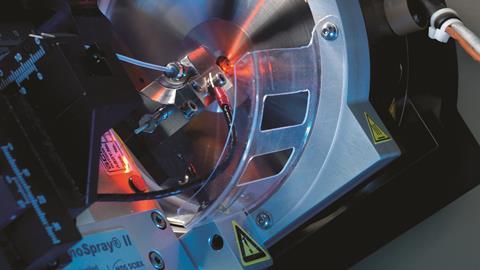

Matrix-assisted laser desorption ionisation was invented by Franz Hillenkamp and Michael Karas in Germany in the mid-1980s. The Japanese engineer Koichi Tanaka described a similar technique a little later and won a share in the 2002 Nobel prize in chemistry for this work while only in his early 40s, but it is Hillenkamp and Karas’ technique that has become standard. The operation of Maldi apparatus has changed out of recognition in the decades since, but the basic principles are unchanged. The sample to be tested is embedded in a matrix that absorbs electromagnetic radiation in a particular wavelength range, most often ultra-violet. The matrix and its embedded analyte are illuminated by laser light with a wavelength within that range, and much of the energy is absorbed by the matrix, leading to a volume disintegration process (i.e. ablation rather than classical desorption). As a result, the analyte acquires only a little internal energy and stays intact. The ionisation step itself is far more complicated, and several theories have been postulated to explain it, depending on the desorption/ionisation parameters used. The resulting ions pass into a ‘mass analyser’ that separates them according to their mass-to-charge ratio, frequently by accelerating them in a known electric field and measuring their time of flight to a detector.
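
The time-of-flight principle can be sketched numerically. The snippet below is a minimal illustration, assuming an idealised linear instrument in which every ion starts at rest, is accelerated through a single potential difference and then drifts through a field-free tube. The 20kV voltage, the 1m tube length and the function names are illustrative assumptions, not details of any instrument described here.

```python
import math

E_CHARGE = 1.602176634e-19  # elementary charge in coulombs
DALTON = 1.66053906660e-27  # atomic mass constant in kilograms


def flight_time(mass_da, charge, accel_voltage, drift_length):
    """Drift time in seconds for an ion in an idealised linear TOF analyser.

    Assumes the ion starts at rest, gains kinetic energy z*e*U in the
    acceleration region and then drifts at constant speed (hypothetical model).
    """
    mass_kg = mass_da * DALTON
    kinetic_energy = charge * E_CHARGE * accel_voltage  # z * e * U
    velocity = math.sqrt(2 * kinetic_energy / mass_kg)  # from (1/2) m v^2 = z e U
    return drift_length / velocity


def mz_from_flight_time(time_s, accel_voltage, drift_length):
    """Invert the relation above: mass-to-charge ratio in Da per unit charge."""
    return 2 * E_CHARGE * accel_voltage * time_s**2 / (drift_length**2 * DALTON)


if __name__ == "__main__":
    # Illustrative settings only: 20 kV acceleration, 1 m drift tube.
    for mass in (1000, 4000, 16000):
        t = flight_time(mass, 1, 20_000, 1.0)
        print(f"m/z {mass}: {t * 1e6:.1f} microseconds")
```

Under these assumptions a singly charged ion of mass 1000Da arrives after about 16µs, and flight time grows with the square root of the mass-to-charge ratio, so an ion four times heavier takes twice as long.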

Even these Maldi techniques are not soft enough for many biological applications, however. Protein complexes fall apart, and glycans, phosphates and other post-translational modifications ‘fall off’ their amino acids when irradiated by the 337nm ultra-violet laser light that is most often used in biomedical research. Rainer Cramer, now a professor of bioanalytical sciences at the University of Reading in the UK, was involved in developing Maldi techniques using lower-energy lasers as a PhD student in Hillenkamp’s lab in Münster and at Vanderbilt University in Tennessee, US. He remembers another link between mass spectrometry and the US defence capability. ‘When Congress shut down the controversial “Star Wars” strategic defence initiative in the early 1990s, a lot of its kit, including infra-red free-electron lasers, was made available for biomedical research. We used one of these for our Maldi apparatus.’ Infra-red Maldi is soft enough for phosphorylated and glycosylated proteins to stay intact in the ioniser, but these lasers are still much more expensive and harder to use than the common ultra-violet ones.

The most precise forms of quantitative mass spectrometry will involve several mass determination steps, separated by some form of chemical fragmentation of the molecular species. When the sample is a protein, the initial fragmentation step is simply proteolysis. Cramer’s group is developing such protocols for the analysis of proteins in blood and other body fluids, principally for detecting and diagnosing cancer. The term ‘bottom-up proteomics’ is applied to any method in which proteins in a sample are broken down to peptides before any masses are determined; if this is applied to the crude protein extracted from cells, it can be termed ‘shotgun proteomics’ by analogy with shotgun DNA sequencing.

Dire need

Ovarian cancer is a disease for which accurate tools for early detection and diagnosis are still badly needed. It is known as a silent killer because early symptoms are vague and diffuse and, consequently, it is often only detected at a late stage when treatment rarely succeeds. About three-quarters of women with the most common form, epithelial ovarian cancer, will have raised levels of a protein known as CA125 in their blood. ‘CA125 is the best biomarker we have for ovarian cancer, but it is still not good enough,’ says Cramer. ‘Mass spectrometry can add other biomarkers or biomarker profiles to CA125 enzyme-linked immunosorbent assays and other immunological methods, but this only brings a slight improvement in detection, because the disease is relatively rare and unless specificity is close to 100%, false positives will be quite common.’
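
Cramer’s point about rarity and specificity can be made concrete with a short calculation of positive predictive value, the fraction of positive test results that are true positives. This is a sketch under stated assumptions: the prevalence of 1 in 2500 and the 75% sensitivity are hypothetical inputs chosen for illustration, not figures from Cramer’s work or from any screening trial.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Fraction of positive results that are true positives (Bayes' rule)."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * (1 - specificity)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    prevalence = 1 / 2500   # assumed share of screened women with the disease
    sensitivity = 0.75      # assumed share of true cases the test flags
    for specificity in (0.95, 0.99, 0.999):
        ppv = positive_predictive_value(prevalence, sensitivity, specificity)
        print(f"specificity {specificity:.1%}: PPV {ppv:.1%}")
```

With these assumed inputs, a test that is 99% specific gives a positive predictive value of only about 3%, and even at 99.9% specificity fewer than a quarter of positive results would be true cases.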

False positive cancer diagnoses can distress patients, waste valuable health resources and delay the diagnosis of commoner conditions that may be more benign but still painful. Ideally, we need more accurate and precise simultaneous measurements of many more biomarkers to build up a proteomic ‘signal’ of ovarian cancer, and MS is the ideal technique to use for this. Cramer and his co-workers are developing protocols for the rapid detection of multiple biomarkers of disease in body fluids; these also have veterinary applications that include the diagnosis of mastitis from bovine milk. ‘We are still investigating how and where MS can add value to the most widely used techniques for clinical diagnosis, to improve precision, sensitivity and specificity,’ says Cramer.

Very few applications of protein mass spectrometry have entered the clinic, at least for routine applications. With smaller molecules, however, the situation is very different. The smaller and cheaper mass spectrometers that are used to obtain precise measurements of small and medium-sized molecules have been routinely found in hospital analytical labs since at least the turn of the millennium. These are typically LC–MS–MS machines, combining the separation facility of liquid chromatography with the precise mass determination of tandem mass spectrometry. Until very recently, two applications formed the mainstay of their work: monitoring the concentrations of immunosuppressant drugs, and of vitamin D and its metabolites in serum or whole blood.

In the clinic

The bioanalytical facility at the University of East Anglia, which serves Norfolk and Norwich University Hospital and takes in contract analyses from a much wider area, is typical of those in large teaching hospitals. Since 2011 it has been run by facility manager Jonathan Tang and senior research fellow John Dutton under the overall direction of Bill Fraser, a professor of medicine. Dutton remembers many of the same routine clinical assays being requested when he began his career as an analytical chemist in the 1970s. In the 40-plus years since, he has seen enormous improvements in speed and throughput of the assays and in the precision of the results that can be obtained. ‘The introduction of LC–MS–MS into our work about 10–15 years ago led to some of the most significant of these improvements,’ he says.

Seen from the perspective of an analytical scientist, however, the new kit has disadvantages as well as advantages. ‘With the latest routine clinical analysers, there can be little to do other than insert cartridges, press buttons and record measurements,’ says Dutton. ‘These analysers are robots, and sometimes the scientists can feel as if they are becoming robots themselves. We keep our junior staff motivated by involving them in research.’

Nicole Ball, a recent graduate who has been on a placement in the lab en route to a PhD, is one such junior scientist. Working under Tang’s supervision, she has developed an LC–MS–MS method for detecting and distinguishing between the corticosteroids cortisol and prednisolone in human serum. These are the mainstay of treatment for Addison’s disease, in which the adrenal glands fail to produce enough corticosteroids, and patients’ steroid levels under treatment need constant monitoring. ‘Over 90% of glucocorticoid assays in the UK still use immunoassay techniques, which can be less specific than MS and cannot distinguish between the various molecules,’ says Tang. ‘The test we have been developing can measure both molecules simultaneously, and it is selective, specific and fast.’ Ball adds that she has learned much from her placement that will help with her PhD studies.

The range of assays now available in the UEA facility also includes the detection and measurement of some larger molecules, including collagen breakdown products. ‘Bone tumours cause normal bone cells to degrade, and their collagen breaks down and enters the urine; the concentration of these peptides can be measured there, and these form useful biomarkers for bone tumours,’ says Dutton. He expects that a wider variety of assays for large molecules, including proteins and peptides, will be available in the coming years. Other likely developments in the next few years are further improvements in sensitivity, allowing measurements down to pico- or even femtomolar concentrations, and, of course, even more automation. ‘But automation is a necessary evil,’ he adds.

Entering phase zero

Sensitive measurements of tiny compound concentrations are invaluable in one relatively new type of clinical trial: the so-called ‘investigational new drug’ or Phase 0 study. Typically, clinical evaluation of a potential drug compound begins with a Phase 1 trial in which increasing doses are tested for safety and to establish a likely starting dose for later trials. Phase 1 can only start when the compound’s pharmacokinetics has been established from animal studies, which can sometimes be very difficult: for example, if tests in different species yield very different results. One potential aim of Phase 0 trials is to find out the same information by testing microdoses in human subjects. A typical dose of under 100µg of a small-molecule drug is generally far too low to produce either toxic or therapeutic effects, but its distribution and metabolism can be elucidated using quantitative mass spectrometry.

Pharma giant GSK has used this approach in an early trial of a candidate drug for malaria, GSK3191607, and the results led to the candidate’s development being discontinued. ‘In this microdose study, we measured the drug concentrations over time with high precision using the MS-based method of accelerator mass spectrometry (AMS), and this enabled us to estimate its pharmacokinetic parameters,’ explains Malek Okour, GSK’s clinical pharmacology lead for the trial. ‘We found that its elimination half-life was relatively short, and none of the simulated therapeutic dose regimens were expected to give the candidate drug an advantage over similar compounds in more advanced trials or clinical use: the decision to halt its development was therefore a relatively straightforward one.’
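
How an elimination half-life can be estimated from a series of concentration measurements is easy to sketch. The example below is a minimal illustration, assuming simple first-order (one-compartment) elimination and fitting a straight line to the logarithm of concentration against time. It is not the method used by GSK, and the data points are synthetic values made up to halve every two hours.

```python
import math


def elimination_half_life(times_h, concentrations):
    """Estimate half-life (hours) by least-squares fit of ln(C) against time.

    Assumes first-order elimination, C(t) = C0 * exp(-k * t), so that
    ln(C) falls linearly with slope -k and the half-life is ln(2) / k.
    """
    logs = [math.log(c) for c in concentrations]
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_y = sum(logs) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_h, logs))
    sxx = sum((t - mean_t) ** 2 for t in times_h)
    k_elimination = -sxy / sxx  # slope of ln(C) against t is -k
    return math.log(2) / k_elimination


if __name__ == "__main__":
    # Synthetic data (arbitrary concentration units) that halve every 2 h.
    times = [0, 2, 4, 6]
    conc = [50.0, 25.0, 12.5, 6.25]
    print(f"estimated half-life: {elimination_half_life(times, conc):.2f} h")
```

Real pharmacokinetic analyses are usually more involved, typically using multi-compartment models and fitting only the terminal phase of the curve, but the log-linear fit shows why precise measurements at very low concentrations matter: the later time points carry much of the information about the slope.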

Okour and his colleagues used AMS to measure the concentration of GSK3191607 in their subjects’ plasma. This technique involves accelerating molecular ions to an extremely high velocity, and therefore a high kinetic energy. The covalent bonds are then broken using a technique known as ‘stripping’, and the individual ions are separated and detected by single-atom counting. This enables rare atomic isotopes to be separated from abundant neighbouring ones (e.g. ¹⁴C from ¹²C) and then quantified. AMS is most commonly used for radiocarbon dating, but it has many biomedical uses. In this trial, the administered drug was enriched with trace levels of ¹⁴C, and the concentration of the drug in plasma was determined from the measured ¹⁴C concentration.

The decision on GSK3191607 was no less useful or important for being a negative one, as GSK’s Graeme Young, director and GSK fellow in translational medicine, explains. ‘Companies like to use the phrase “fail fast, fail cheap” but they still don’t like “failing” molecules. Thinking instead of “finding the truth about a molecule” should lead to improved “go/no go” decisions at earlier stages and cut the cost of drug development by limiting investment in ultimately unsuccessful compounds.’ And as mass spectrometry enters its second century it should become an even more useful tool for this type of decision making, and for many more clinical and pharmaceutical research applications.

Clare Sansom is a science writer based in London, UK
