Computers are getting better at modelling chemistry. But there are still many challenges to overcome.

Computational chemistry is evolving rapidly, improving our ability to understand the world. This progress has sparked debate, however, about how computational methods should be used to answer different questions, especially given the competing forces at work.

For example, there is a trade-off between accuracy and speed, which leads to sometimes controversial compromises. Most of these controversies revolve around which approximations are most appropriate for a given type of system – a poor choice could lead to erroneous or misleading results. Another challenge has been the balance between gaining a deep understanding of the fundamental details of specific systems and pursuing broader, data-based approaches that can be generalised to different applications. In some cases, this has been perceived as pitting intellectual curiosity against the practical goals of industry.

Yet progress relies on having a dialogue between the communities pursuing method development and those focused on applications. Ideally, the applications should drive method development, encouraging developers to aim toward applications of high interest, while the applications community should exploit the cutting-edge tools with full knowledge of their limitations.

Accuracy versus speed

Despite growing computer power, computational chemistry is still a compromise between getting an accurate answer and getting a quick answer. Finding the balance often depends on the size of the system – as systems get bigger, approximations that trade accuracy for speed must be introduced.

Small molecules can be treated rigorously using high-level quantum mechanical wave function methods to obtain quantitatively accurate geometries and relative energies. That quickly becomes too burdensome for larger molecules, so a less computationally demanding approach such as density functional theory (DFT) must be used. DFT is based on rigorous theory and is exact in principle, but it relies on approximating a quantity called the electron exchange–correlation functional. Typically this approximation involves using parameters fitted to energies and sometimes geometries of molecules in data sets. As a result, each functional tends to work well for systems similar to those in the data sets. Discovering a functional that works for all types of molecules would be a significant step. An alternative approach is to develop wave function-based methods that are computationally tractable for larger systems.
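To make the phrase "quickly becomes too burdensome" concrete, the sketch below compares how cost grows with system size under textbook formal scaling exponents. These exponents are not taken from the article: they are commonly quoted formal values (conventional DFT roughly N^3, MP2 N^5, CCSD N^6, CCSD(T) N^7), and real implementations often do better through screening or local approximations. The function name `relative_cost` is hypothetical.

```python
# Commonly quoted formal scaling exponents with system size N.
# Assumption: these are idealised textbook values, not measured timings.
FORMAL_SCALING = {
    "DFT (conventional)": 3,
    "MP2": 5,
    "CCSD": 6,
    "CCSD(T)": 7,
}


def relative_cost(exponent: int, size_factor: float) -> float:
    """Cost multiplier when the system grows by size_factor, assuming cost ~ N**exponent."""
    return size_factor ** exponent


if __name__ == "__main__":
    for method, exponent in FORMAL_SCALING.items():
        doubled = relative_cost(exponent, 2)
        tenfold = relative_cost(exponent, 10)
        print(f"{method:20s} 2x size -> {doubled:6.0f}x cost, 10x size -> {tenfold:.0e}x cost")
```

Under these assumptions, doubling the system size multiplies the cost of the highest-level method listed by more than a hundred, while the DFT cost grows by less than ten. That gap is why cheaper approximations take over as systems get bigger.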


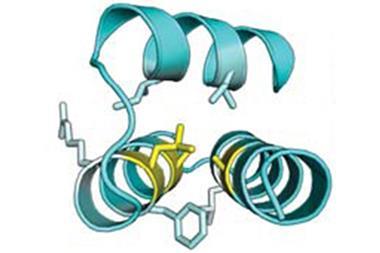

Moving up in scale to proteins, membranes and even entire cells, a similar compromise must be made [1]. These molecules have a much larger conformational space, and trying to find the most stable states on a large potential energy surface introduces competition between the accuracy of the surface and how extensively we explore that conformational space. Smaller biomolecules can be treated with methods such as DFT, but larger biological systems are typically treated with more approximate molecular mechanical force fields. For even larger systems, coarse graining is required, moving from an atomic-level description to considering groups of atoms or molecules and, in some cases, a continuum treatment of certain parts of the system.
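The idea of coarse graining can be illustrated with a minimal sketch in which each group of atoms is replaced by a single bead at the group's centre of mass. This is only one simple mapping choice, assumed here for illustration. Practical coarse-grained models also need effective interactions between beads, which are not shown. The names `centre_of_mass` and `coarse_grain` and the toy coordinates are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def centre_of_mass(masses: Sequence[float], coords: Sequence[Vec3]) -> Vec3:
    """Mass-weighted average position of a group of atoms."""
    total = sum(masses)
    x = sum(m * c[0] for m, c in zip(masses, coords)) / total
    y = sum(m * c[1] for m, c in zip(masses, coords)) / total
    z = sum(m * c[2] for m, c in zip(masses, coords)) / total
    return (x, y, z)


def coarse_grain(
    masses: Sequence[float],
    coords: Sequence[Vec3],
    mapping: Dict[str, List[int]],
) -> Dict[str, Vec3]:
    """Replace each named group of atom indices with one bead at its centre of mass."""
    beads = {}
    for name, indices in mapping.items():
        group_masses = [masses[i] for i in indices]
        group_coords = [coords[i] for i in indices]
        beads[name] = centre_of_mass(group_masses, group_coords)
    return beads


if __name__ == "__main__":
    # Made-up toy system: four equal-mass atoms on a line, grouped into two beads.
    masses = [12.0, 12.0, 12.0, 12.0]
    coords = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
    mapping = {"bead_A": [0, 1], "bead_B": [2, 3]}
    print(coarse_grain(masses, coords, mapping))
```

The number of particles, and with it the cost of each simulation step, drops with the number of atoms folded into each bead. The price is the loss of atomic detail within each group.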

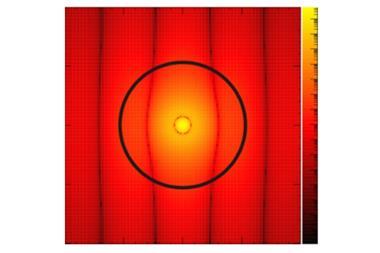

A similar hierarchy exists in simulating complex materials. Solids and surfaces can be treated with DFT methods exploiting periodicity. Simulating solid–liquid interfaces often requires explicitly treating solvent molecules and ions, as well as accounting for the effects of the applied potential and associated electric field for electrochemical processes. In some cases, microkinetic modelling is used to include effects such as flow and diffusion in conjunction with the relevant chemical reactions.
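As a rough illustration of the kinetic part of such a model, the sketch below integrates rate equations for a hypothetical two-step sequence A → B → C with first-order rate constants. It is a deliberately stripped-down assumption: flow and diffusion are left out, the rate constants are made up, and a simple explicit Euler scheme stands in for the stiff solvers a production code would normally use.

```python
import math


def simulate(k1: float, k2: float, a0: float = 1.0,
             t_end: float = 5.0, dt: float = 1e-3):
    """Integrate dA/dt = -k1*A, dB/dt = k1*A - k2*B, dC/dt = k2*B with explicit Euler."""
    a, b, c = a0, 0.0, 0.0
    steps = int(round(t_end / dt))
    for _ in range(steps):
        r1 = k1 * a  # rate of A -> B
        r2 = k2 * b  # rate of B -> C
        a -= r1 * dt
        b += (r1 - r2) * dt
        c += r2 * dt
    return a, b, c


if __name__ == "__main__":
    # Hypothetical rate constants, in arbitrary inverse-time units.
    a, b, c = simulate(k1=1.0, k2=0.5)
    print(f"A = {a:.4f}, B = {b:.4f}, C = {c:.4f}")
    print(f"analytic A = {math.exp(-1.0 * 5.0):.4f}")
```

In a fuller treatment, the rate constants would typically come from calculated or measured barriers, and transport terms would be added to the same set of equations.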

Depth versus breadth

Then there are the debates about the types of answers we strive to obtain. The methods described above can be used to elucidate detailed mechanisms of specific reactions, helping both to interpret experimental data and to make testable predictions. For example, a recent study predicted a ~300 mV shift in the redox potential associated with a proton-coupled electron transfer reaction in a benzimidazole–phenol dyad when the benzimidazole is amino-substituted [2]. The molecules were synthesised and experiments validated the predictions. These predictive studies are gradually increasing the chemistry community’s trust in theory. Moreover, these calculations generate design principles for developing molecular, biological, or materials systems with specified properties [3].
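For a sense of scale, a shift in redox potential can be converted to a free-energy difference through |ΔG| = nF|ΔE|, where F is the Faraday constant. The sketch below applies this to the ~300 mV shift mentioned above, assuming a one-electron process (n = 1 is an assumption made here for illustration). The function name is hypothetical, and only the magnitude is computed, not the sign.

```python
FARADAY = 96485.33212  # Faraday constant in C/mol
KJ_PER_KCAL = 4.184    # thermochemical calorie


def potential_shift_to_energy_kj(delta_e_volts: float, n_electrons: int = 1) -> float:
    """Magnitude of the free-energy change in kJ/mol for a potential shift in volts."""
    return n_electrons * FARADAY * abs(delta_e_volts) / 1000.0


if __name__ == "__main__":
    energy_kj = potential_shift_to_energy_kj(0.300)
    print(f"{energy_kj:.1f} kJ/mol, {energy_kj / KJ_PER_KCAL:.1f} kcal/mol")
```

Under that assumption, a 300 mV shift corresponds to roughly 29 kJ/mol, or about 7 kcal/mol.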

An alternative approach is to generate and analyse large quantities of data. Typically this approach is less concerned with understanding fundamental physical principles and focuses instead on generating practical results. For example, virtual screening can be used in conjunction with specified descriptors to design systems with desired properties. Machine learning is another powerful tool that can be used to design systems based on pattern recognition. For both approaches, success depends on the choice of descriptors, and a mechanistic interpretation with multiple descriptors is challenging. In the field of biology, bioinformatics has been used to design proteins using information contained in the protein database.

A computational chemist must balance all of these considerations: the questions being posed, the level of accuracy, the size of the system, and the computational resources available.

As more computer power becomes available, we can target larger systems with greater accuracy. This moving target means we are never fully satisfied – we will always expand our goals to push the limits of the available computer power. As the field evolves, ambitious applications will inspire innovative methodological advances, which in turn will enable applications that push the frontier forward.

Sharon Hammes-Schiffer is professor of chemistry at the University of Illinois, Urbana-Champaign in the US

References

1 T M Earnest et al., Biopolymers, 2016, 105, 735 (DOI: 10.1002/bip.22892)

2 M T Huynh et al., ACS Cent. Sci., 2017, 3, 372 (DOI: 10.1021/acscentsci.7b00125)

3 S Hammes-Schiffer, Acc. Chem. Res., 2017, 50, 561 (DOI: 10.1021/acs.accounts.6b00555)
