Molecular dynamics is at the point of simulating bulk matter – but don’t expect it to predict the future

The TV series Devs took as its premise the idea that a quantum computer of sufficient power could simulate the world so completely that it could project events accurately back into the distant past (the Crucifixion or prehistory) and predict the future. At face value somewhat absurd, the scenario supplied a framework on which to hang questions about determinism and free will (and less happily, the Many Worlds interpretation of quantum mechanics).

Quite what quantum computers will do for molecular simulations remains to be seen, but the excitement about them shouldn’t eclipse the staggering advances still being made in classical simulation. Full ab initio quantum-chemical calculations are very computationally expensive even with the inevitable approximations they entail, so it has been challenging to bring this degree of precision to traditional molecular dynamics, where molecular interactions are still typically described by classical potentials. Even simulating pure water, where accurate modelling of hydrogen bonding and the ionic dissociation of molecules involves quantum effects, has been tough.

Now a team that includes Linfeng Zhang and Roberto Car of Princeton University, US, has conducted ab initio molecular dynamics simulations for up to 100 million atoms, probing timescales up to a few nanoseconds.1 Sure, it’s a long way from the Devs fantasy of an exact replica of reality. But it suggests that simulations with quantum precision are reaching the stage where we can talk not in terms of handfuls of molecules but of bulk matter.

Training and learning

How do they do it? The trick, which researchers have been exploring for several years now, is to replace quantum-chemical calculations with machine learning (ML). The general strategy of ML is that an algorithm learns to solve a complex problem by being trained with many examples for which the answers are already known, from which it deduces the general ‘shape’ of solutions in some high-dimensional space. It then uses that shape to interpolate for examples that it hasn’t seen before. The familiar example is image interpretation: the ML system works out what to look for in photos of cats, so that it can then spot which new images have cats in them. It can work remarkably well – so long as it is not presented with cases that lie far outside the bounds of the training set.
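The train-then-interpolate idea can be sketched in a few lines. This is only a toy illustration, not the researchers' method: a Morse pair potential stands in for the expensive reference calculation, a simple kernel regression (Nadaraya-Watson averaging) stands in for the ML model, and every function name and parameter value here is an assumption chosen for the example.

```python
import math

def morse_energy(r, depth=1.0, width=1.5, r_eq=1.0):
    """Toy stand-in for an expensive reference calculation: a Morse pair potential."""
    return depth * (1.0 - math.exp(-width * (r - r_eq))) ** 2 - depth

def train(r_min=0.8, r_max=2.0, n_points=61):
    """Build a training set of (distance, energy) pairs whose answers are known."""
    step = (r_max - r_min) / (n_points - 1)
    distances = [r_min + i * step for i in range(n_points)]
    return [(r, morse_energy(r)) for r in distances]

def predict(training_set, r, bandwidth=0.03):
    """Kernel regression: a Gaussian-weighted average of the known energies."""
    weights = [math.exp(-((r - r_i) ** 2) / (2.0 * bandwidth ** 2))
               for r_i, _ in training_set]
    weighted_sum = sum(w * e_i for w, (_, e_i) in zip(weights, training_set))
    return weighted_sum / sum(weights)

training_set = train()

# A distance inside the training range (0.8 to 2.0) that was never seen exactly.
inside_error = abs(predict(training_set, 1.23) - morse_energy(1.23))
# A distance well outside the training range.
outside_error = abs(predict(training_set, 0.5) - morse_energy(0.5))

print(f"error inside training range:  {inside_error:.4f}")
print(f"error outside training range: {outside_error:.4f}")
```

The model does well between its training points but has nothing sensible to say about a separation it has never been shown, which is the caveat about straying outside the training set in miniature.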

The approach is being widely used in molecular and materials science, for example to predict crystal structures from elemental composition,2-3 or electronic structure from crystal structure.4-5 In the latter case, bulk electronic properties such as band gaps have traditionally been calculated using density functional theory (DFT), an approximate way to solve the quantum-mechanical equations of many-body systems. Here the spatial distribution of electron density is computationally iterated from some initial guess until it fits the equations in a self-consistent way. But it’s computationally intensive, and ML circumvents the calculations by figuring out from known cases what kind of electron distribution a given configuration of atoms will have.
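To give a flavour of what 'iterated until self-consistent' means, here is a deliberately tiny sketch. It is not DFT: a single number plays the role of the electron density, the effective potential depends on that density, and the density is recomputed from the potential until the two agree. The model, the parameter values and the function names are all assumptions made for illustration.

```python
import math

def occupation_from_potential(potential, temperature=0.5):
    """Fermi-like occupation of a single level at the given effective potential."""
    return 1.0 / (1.0 + math.exp(potential / temperature))

def updated_density(density, external=-1.0, repulsion=2.0):
    """One pass: build the effective potential from the density, then recompute the density."""
    effective_potential = external + repulsion * density
    return occupation_from_potential(effective_potential)

def self_consistent_density(initial_guess=0.1, mixing=0.3,
                            tolerance=1e-10, max_iterations=200):
    """Iterate from an initial guess until input and output densities agree."""
    density = initial_guess
    for iteration in range(1, max_iterations + 1):
        new_density = updated_density(density)
        if abs(new_density - density) < tolerance:
            return new_density, iteration
        # Linear mixing: move only part of the way towards the new density,
        # which damps the oscillations a full update would cause here.
        density = (1.0 - mixing) * density + mixing * new_density
    raise RuntimeError("self-consistency not reached")

density, iterations = self_consistent_density()
print(f"self-consistent density {density:.6f} after {iterations} iterations")
```

Even this one-variable toy needs a couple of dozen passes. In a real calculation each pass involves the full electron distribution of the system, which is where the expense comes from.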

The approach can in principle be used for molecular dynamics by recalculating the electron densities at each time step. Zhang and colleagues have now shown how far this idea can be pushed using supercomputing technology, clever algorithms, and state-of-the-art artificial intelligence.6 They present results for simulations of up to 113 million atoms for the test case of a block of copper atoms, enabling something approaching a prediction of bulk-like mechanical behaviour from quantum chemistry. Their simulations of liquid water, meanwhile, contain up to 12.6 million atoms.
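The structure of such a simulation is easy to sketch, even if the real thing is not. In the minimal example below, a standard velocity Verlet integrator queries a force model once per time step. The `surrogate_force` function is a hypothetical placeholder (a simple spring) for a trained model, and the one-dimensional set-up, names and parameters are assumptions for illustration only, not the scheme used in the paper.

```python
def surrogate_force(position, stiffness=1.0):
    """Hypothetical stand-in for a trained model: returns the force at a position."""
    return -stiffness * position

def surrogate_energy(position, stiffness=1.0):
    """Potential energy consistent with surrogate_force."""
    return 0.5 * stiffness * position ** 2

def run_dynamics(force_model, position, velocity,
                 mass=1.0, time_step=0.01, n_steps=1000):
    """Velocity Verlet integration; the force model is queried once per time step."""
    force = force_model(position)
    trajectory = [(position, velocity)]
    for _ in range(n_steps):
        velocity += 0.5 * time_step * force / mass   # first half kick
        position += time_step * velocity             # drift
        force = force_model(position)                # re-evaluate the model
        velocity += 0.5 * time_step * force / mass   # second half kick
        trajectory.append((position, velocity))
    return trajectory

def total_energy(position, velocity, mass=1.0):
    return 0.5 * mass * velocity ** 2 + surrogate_energy(position)

trajectory = run_dynamics(surrogate_force, position=1.0, velocity=0.0)
energy_start = total_energy(*trajectory[0])
energy_end = total_energy(*trajectory[-1])
print(f"energy drift over {len(trajectory) - 1} steps: "
      f"{abs(energy_end - energy_start):.2e}")
```

Because the model is called at every step, its cost per evaluation sets how many atoms and how much simulated time are affordable, which is why swapping a quantum calculation for a fast learned model matters so much.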

Indistinguishable but faster

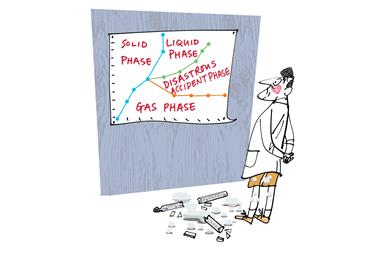

For small systems where the comparison to full quantum DFT calculations can be made, the researchers find electron distributions essentially indistinguishable from the full calculations, while gaining 4–5 orders of magnitude in speed. Their system can capture the full phase diagram of water over a wide range of temperature and pressure, and can simulate processes such as ice nucleation. In some situations water can be ‘coarse-grained’ such that hydrogen bonding can still be modelled without including the hydrogen atoms explicitly.7 The researchers say it should be possible soon to follow such processes on timescales approaching microseconds for about a million water molecules, enabling them to look at processes such as droplet and ice formation in the atmosphere.

Both of these test cases are helped by being relatively homogeneous, involving largely identical atoms or molecules. Still, the prospects of this deep-learning approach look good for studying much more heterogeneous systems such as complex alloys.8 One very attractive goal is, of course, biomolecular systems, where the ability to model fully solvated proteins, membranes and other cell components could help us understand complex mesoscale cell processes and predict the behaviour of drug candidates. One challenge here is how to include long-range interactions such as electrostatic forces.

It’s a long way from Devs-style simulations of minds and histories, which will perhaps only ever be fantasies. But one scene in that series showed what might be a more tractable goal: the simulation of a growing snowflake. What a wonderful way that would be to advertise the simulator’s art.

References

1 Jia et al, arXiv, 2020, http://www.arxiv.org/abs/2005.00223 (submitted, ACM, New York, 2020)

2 C C Fischer et al, Nat. Mater., 2006, 5, 641 (DOI: 10.1038/nmat1691)

3 N Mounet et al, Nat. Nanotechnol., 2018, 13, 246 (DOI: 10.1038/s41565-017-0035-5)

4 Y Dong et al, npj Comput. Mater., 2019, 5, 26 (DOI: 10.1038/s41524-019-0165-4)

5 A Chandrasekaran et al, npj Comput. Mater., 2019, 5, 22 (DOI: 10.1038/s41524-019-0162-7)

6 L Zhang et al, Phys. Rev. Lett., 2018, 120, 143001 (DOI: 10.1103/PhysRevLett.120.143001)

7 L Zhang et al, J. Chem. Phys., 2018, 149, 034101 (DOI: 10.1063/1.5027645)

8 F-Z Dai et al, J. Mater. Sci. Technol., 2020, 43, 168 (DOI: 10.1016/j.jmst.2020.01.005)
