‘It feels like, to me, that they approached machine learning like using a hammer to solve a problem,’ says machine learning expert Siddha Ganju, who works on self-driving car technology at US company Nvidia. Ganju is referring to an algorithm designed to predict reaction outcomes1 – a study now disputed by another research team who says it fails classical controls in machine learning.2

Although the study’s authors disagree with this assessment,3 the controversy highlights the potential pitfalls of machine learning methods as they gain traction. A decade ago, there were only a few hundred chemistry machine learning publications. In 2018, almost 8000 articles in Web of Science’s chemistry collection contained the keyword ‘machine learning’.

Learning algorithms promise to overhaul drug discovery, synthesis and materials science. ‘But since more and more chemists come to the field [of machine learning], unfortunately, sometimes best practices aren’t followed,’ says Olexandr Isayev, chemist and machine learning expert from University of North Carolina at Chapel Hill, US.

Chemical patterns

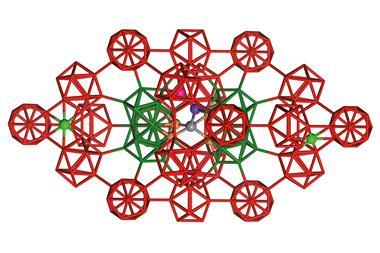

In February 2018, a team led by Abigail Doyle from Princeton University, US, and Spencer Dreher from Merck & Co created a machine learning model to predict yields of cross coupling reactions spiked with isoxazoles – heterocycles known to inhibit the reaction.

The researchers had fed their algorithm with yields and reagent parameters – orbital energies, dipole moments and NMR shifts among many others – of 3000 reactions. The model could predict, with high accuracy, yields of reactions it hadn’t come across yet.

However, Kangway Chuang and Michael Keiser from University of California, San Francisco, US, felt Doyle’s team had failed to run sufficient control experiments. When Keiser and Chuang trained an identical algorithm on nonsense data – random barcodes instead of chemical parameters – it was almost as good at predicting yields as Doyle’s. ‘How on earth would a model that doesn’t even get to see chemical features predict reaction yields?’ Keiser wonders. ‘Any model that succeeds isn’t using chemistry.’
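
The logic of such a control can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the method of either research team: the reagents, their hidden effects, the simulated yields and the deliberately crude model (`fit`, `predict`) are all hypothetical. The model sees only random barcodes and merely memorises average yields per barcode, yet it can still score well when test reactions reuse reagents seen in training.

```python
import random

rng = random.Random(0)
N_ADDITIVES, N_HALIDES = 20, 15

# Assumption: each reagent has a hidden effect; the yield is their sum plus noise.
additive_effect = [rng.uniform(0, 50) for _ in range(N_ADDITIVES)]
halide_effect = [rng.uniform(0, 50) for _ in range(N_HALIDES)]

# Random barcodes: one meaningless tuple per reagent, carrying no chemistry.
additive_code = [tuple(rng.random() for _ in range(4)) for _ in range(N_ADDITIVES)]
halide_code = [tuple(rng.random() for _ in range(4)) for _ in range(N_HALIDES)]

# Each reaction is (additive barcode, halide barcode, simulated yield-like value).
reactions = [
    (additive_code[a], halide_code[h],
     additive_effect[a] + halide_effect[h] + rng.gauss(0, 3))
    for a in range(N_ADDITIVES) for h in range(N_HALIDES)
]

def group_means(rows, column):
    """Mean yield of the rows sharing each distinct value in `column`."""
    sums = {}
    for row in rows:
        total, count = sums.get(row[column], (0.0, 0))
        sums[row[column]] = (total + row[2], count + 1)
    return {key: total / count for key, (total, count) in sums.items()}

def fit(train):
    """Toy additive model: it only memorises per-barcode average yields."""
    grand = sum(row[2] for row in train) / len(train)
    return grand, group_means(train, 0), group_means(train, 1)

def predict(model, row):
    grand, by_additive, by_halide = model
    # Barcodes never seen in training fall back to the overall mean.
    return by_additive.get(row[0], grand) + by_halide.get(row[1], grand) - grand

def r_squared(model, rows):
    mean_y = sum(row[2] for row in rows) / len(rows)
    ss_res = sum((row[2] - predict(model, row)) ** 2 for row in rows)
    ss_tot = sum((row[2] - mean_y) ** 2 for row in rows)
    return 1 - ss_res / ss_tot

# Random 75/25 split: test reagents have almost certainly been seen in training.
rng.shuffle(reactions)
cut = int(0.75 * len(reactions))
train, test = reactions[:cut], reactions[cut:]

r2_barcode = r_squared(fit(train), test)
print(f"R^2 of barcode-only model on a random split: {r2_barcode:.2f}")
```

In a real control, the same learner would be trained twice, once on the chemical descriptors and once on the barcodes. If the two scores are close, the score alone says little about whether the model has learned any chemistry.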

The problem, Keiser explains, could stem from a lack of variety in the datasets used to train and test the model. ‘[It’s] pernicious, because it’s subtle and easy to miss: maybe the chemical patterns you see are really not that varied,’ Keiser says. When training and test sets look too similar, explains Ganju, it inflates the model’s accuracy.
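
One way to check how similar the two sets are is to count how many test reactions contain a reagent the model has already seen. The sketch below assumes a hypothetical combinatorial screen in which every additive is paired with every aryl halide; the reagent names and the helper `seen_fraction` are illustrative only. It contrasts a random split with a grouped split that holds out whole additives.

```python
import random

rng = random.Random(1)

# Hypothetical combinatorial screen: every additive paired with every aryl halide.
additives = [f"additive_{i}" for i in range(20)]
halides = [f"halide_{j}" for j in range(15)]
reactions = [(a, h) for a in additives for h in halides]

def seen_fraction(train, test):
    """Fraction of test reactions whose additive also occurs in training."""
    train_additives = {a for a, _ in train}
    return sum(a in train_additives for a, _ in test) / len(test)

# Random split: reactions are shuffled individually.
shuffled = reactions[:]
rng.shuffle(shuffled)
cut = int(0.75 * len(shuffled))
random_train, random_test = shuffled[:cut], shuffled[cut:]

# Grouped split: whole additives are held out, so the test set is out-of-sample.
held_out = set(rng.sample(additives, 5))
group_train = [r for r in reactions if r[0] not in held_out]
group_test = [r for r in reactions if r[0] in held_out]

print("random split :", seen_fraction(random_train, random_test))
print("grouped split:", seen_fraction(group_train, group_test))
```

A model evaluated only on the random split is never asked about an additive it has not met, which is one way accuracy can end up inflated.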

‘If there is a way for a machine learning model to find patterns, to cheat and get good performance in ways that we as researchers don’t even think about – it’ll do it,’ says Keiser. There is even a list of such cheating algorithms, put together by Victoria Krakovna from DeepMind. It includes the ‘indolent cannibals’ that emerged from an artificial life simulation.

In the simulation, eating provides energy, moving costs energy and reproducing is energy neutral. The algorithm maximised its energy gain by evolving a sedentary lifestyle that consisted mostly of mating to produce offspring that served as a food source.

Simpler is better

‘In chemistry, the price per data point is high – you need to have new compounds, do new reactions,’ says Isayev. This is why chemical datasets tend to be small, a problem for complex algorithms, which are prone to overfitting.

Models trained on only a few thousand reactions might find not only useful trends but also patterns in underlying noise. Overfitting makes models overconfident and artificially inflates their performance. Ganju recommends sticking to Occam’s razor: ‘Use the simplest possible algorithm or model that helps in solving the problem.’
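
A small simulation illustrates the point. It is a sketch on made-up data, assuming a single hypothetical descriptor `x` with a noisy linear relationship to a yield-like value; neither model comes from the studies discussed here. A straight-line fit is compared with a 1-nearest-neighbour model that memorises every training point, noise included.

```python
import random

rng = random.Random(2)

def sample(n):
    """Noisy linear trend: a hypothetical descriptor x and a yield-like y."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    return [(x, 5 * x + rng.gauss(0, 8)) for x in xs]

# A small training set and a larger set of unseen points.
train, test = sample(30), sample(200)

def fit_line(data):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in data)
    sxx = sum((x - mean_x) ** 2 for x, _ in data)
    slope = sxy / sxx
    return lambda x: slope * x + (mean_y - slope * mean_x)

def fit_nearest(data):
    """1-nearest-neighbour: returns the y of the closest training x."""
    return lambda x: min(data, key=lambda point: abs(point[0] - x))[1]

def mse(model, data):
    """Mean squared error of `model` on `data`."""
    return sum((y - model(x)) ** 2 for x, y in data) / len(data)

for name, fit in [("line", fit_line), ("1-NN", fit_nearest)]:
    model = fit(train)
    print(f"{name}: train MSE {mse(model, train):.1f}, test MSE {mse(model, test):.1f}")
```

The memorising model reproduces its training data perfectly, which looks impressive until it is scored on points it has not seen. The gap between training and test error is the signature of overfitting.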

Small datasets also tend to be biased – they contain too many similar structures, says Isayev. A model trained on such data might do well, ‘but in reality, all [it] does is learn about this particular scaffold’, he points out. ‘It sounds really boring, but data is the key,’ Isayev adds. Curating data requires a certain finesse. ‘As with any simulation, the principle “garbage in–garbage out” applies,’ he says.

‘It’s an outstanding challenge,’ agrees Doyle. ‘How do you know in advance of experimentation what conditions to include as a training set for a model in order to maximise predictive ability out-of-sample?’ In their rebuttal, Doyle, Dreher and colleagues argue that their machine learning model is valid despite Keiser’s attempts to prove otherwise. The randomised model, Doyle explains, can’t make predictions for compounds that weren’t part of its training data.

Jesús Estrada, a PhD researcher in Doyle’s group, says that to disprove the model’s predictive abilities, it’s not enough to do an experiment where the test and training set comprise only components of average reactivity. ‘You want to use a test set that has more extreme outcomes, because that’s what the [random] models can’t predict.’

‘It’s very hard to say who is correct – the truth is probably somewhere in between,’ says Isayev. ‘Maybe the authors weren’t careful in applying certain practices, but it doesn’t discount the fact that you can use machine learning to predict reaction outcomes.’

Overcoming the hype

Although there is no final consensus on the algorithm’s validity, Isayev points out that the discussion is important to help others avoid similar stumbling blocks. ‘There is this misconception about machine learning that it’s a black box. That’s not true,’ Keiser says. The principles of scientific enquiry – testing multiple hypotheses and checking if results align with intuition – should be applied to machine learning as they are to any other type of research.

Rather than expecting quick results, ‘I think the use of machine learning or any computational technique is to reduce search space, which allows scientists a more focused approach – the relevance of domain expertise cannot be ruled out’, says Ganju. ‘Machine learning and AI make for great headlines but sometimes you have to glimpse beyond them to see that the actual results aren’t as earth-shattering.’

References

1 D T Ahneman et al, Science, 2018, 360, 186 (DOI: 10.1126/science.aar5169)

2 K V Chuang and M J Keiser, Science, 2018, 362, eaat8603 (DOI: 10.1126/science.aat8603)

3 J G Estrada et al, Science, 2018, 362, eaat8763 (DOI: 10.1126/science.aat8763)
